Website migrations are among the most complex and high-risk SEO operations. Whether it’s a domain change, CMS switch, redesign, HTTPS implementation or structural overhaul, even small mistakes in the website migration process can lead to traffic drops, indexing issues and long-term revenue loss. That’s why having a clear, structured website migration checklist – and the right technical visibility – is essential.

The “Zero Traffic Loss Migration Checklist” by JetOctopus outlines a practical, data-driven framework for planning and executing migrations without sacrificing organic performance. More importantly, it demonstrates how a powerful enterprise-grade crawler supports SEOs and developers at every stage of an SEO website migration, from pre-migration audits to post-launch validation and monitoring.

Below is a detailed summary of how the checklist works – and how JetOctopus enables safe, measurable and controlled website migrations.

Why Website Migrations Are So Risky

A site migration process affects multiple SEO-critical elements at once:

- URL structures

- Internal linking

- Redirect logic

- Canonicalization

- Indexability rules

- XML sitemaps

- Server responses

- Content rendering

Without full crawl visibility and log-level insights, it’s nearly impossible to detect hidden issues before they impact rankings. That’s why the checklist emphasizes preparation, benchmarking, validation and continuous monitoring, all powered by a scalable SEO crawler designed for large and complex sites.

Below is a structured breakdown of the core website migration steps, along with how JetOctopus supports each phase.

1. Pre-Migration Audit and Benchmarking

Before touching production, you must fully understand the current site.

Key actions include:

- Crawl the entire existing website to create a complete URL inventory.

- Identify all indexable pages, noindex pages and canonicalized URLs.

- Extract internal linking structure and detect orphan pages.

- Analyze status codes and redirect chains.

- Export metadata (titles, descriptions, H1s, canonicals).

- Establish traffic benchmarks for top-performing URLs.

- Identify high-value pages requiring special migration care.

How JetOctopus helps:

- Performs ultra-fast, large-scale crawling to map every URL.

- Segments pages by indexability, status code, depth and directives.

- Visualizes site structure to detect crawl depth risks.

- Provides exportable datasets for redirect mapping.

- Integrates crawl and log data for understanding real bot behavior.

For SEOs and developers, this creates a reliable baseline – the foundation of any professional SEO migration plan.
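A baseline like this can be sketched in a few lines of Python. The sketch assumes the crawl has been exported to CSV with hypothetical columns `url`, `status`, `meta_robots` and `canonical`, and that traffic benchmarks come from a separate analytics export with `url` and `clicks`. These column names and the `min_clicks` threshold are illustrative assumptions, not JetOctopus's actual export format.

```python
import csv
import io

def classify(row):
    """Assign one crawled URL to a baseline segment."""
    status = int(row["status"])
    if status != 200:
        return f"status_{status}"
    if "noindex" in row["meta_robots"].lower():
        return "noindex"
    canonical = row["canonical"].strip()
    if canonical and canonical != row["url"]:
        return "canonicalized"  # canonical points to a different URL
    return "indexable"

def build_baseline(crawl_csv, clicks_csv, min_clicks=100):
    """Return ({url: segment}, sorted list of high-value indexable URLs)."""
    segments = {r["url"]: classify(r) for r in csv.DictReader(io.StringIO(crawl_csv))}
    clicks = {r["url"]: int(r["clicks"]) for r in csv.DictReader(io.StringIO(clicks_csv))}
    # "High value" here = indexable and at or above a hypothetical click threshold.
    high_value = sorted(
        url for url, seg in segments.items()
        if seg == "indexable" and clicks.get(url, 0) >= min_clicks
    )
    return segments, high_value

# Illustrative data only (hypothetical columns).
crawl = """url,status,meta_robots,canonical
https://example.com/,200,index,https://example.com/
https://example.com/a,200,noindex,
https://example.com/b,301,,
https://example.com/c,200,,https://example.com/
https://example.com/d,200,index,https://example.com/d
"""
clicks = """url,clicks
https://example.com/,5000
https://example.com/d,40
"""
segments, high_value = build_baseline(crawl, clicks)
print(segments)
print(high_value)
```

The high-value list is the set of pages that deserves manual review of its redirect target and post-launch traffic.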

2. Redirect Mapping and Validation

Redirect errors are one of the most common causes of traffic loss during a website migration project.

The checklist emphasizes:

- Creating a one-to-one redirect map for all legacy URLs.

- Avoiding redirect chains and loops.

- Ensuring 301 (not 302) status codes for permanent changes.

- Preserving query parameters when necessary.

- Validating canonical consistency post-redirect.

- Testing redirect logic in staging before go-live.
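Testing redirect logic in staging can be sketched as a function that follows observed hops and reports chains, loops, non-permanent codes and dropped query strings. The sketch assumes hop data has already been collected (for example, from a staging crawl) into a dict mapping each URL to its `(status, location)`. That dict format, the `trace` function and the `max_hops` limit are hypothetical choices for illustration.

```python
from urllib.parse import urljoin, urlsplit

REDIRECT_CODES = {301, 302, 303, 307, 308}
PERMANENT_CODES = {301, 308}

def trace(url, responses, max_hops=10):
    """Follow redirects recorded in `responses` (url -> (status, location)).

    URLs missing from `responses` are treated as 404. Returns the hop path,
    the final status (None for loops or over-long chains) and a list of issues.
    """
    path, issues = [url], []
    current = url
    status, location = responses.get(current, (404, None))
    while status in REDIRECT_CODES:
        if status not in PERMANENT_CODES:
            issues.append(f"non-permanent {status} at {current}")
        nxt = urljoin(current, location)  # resolve relative Location headers
        if nxt in path:
            issues.append("redirect loop")
            return {"path": path + [nxt], "final_status": None, "issues": issues}
        path.append(nxt)
        if len(path) - 1 > max_hops:
            issues.append("too many hops")
            return {"path": path, "final_status": None, "issues": issues}
        current = nxt
        status, location = responses.get(current, (404, None))
    if len(path) > 2:
        issues.append(f"chain of {len(path) - 1} hops")
    if status != 200:
        issues.append(f"final status {status}")
    # Flag legacy query strings that disappear (only a problem "when necessary").
    if urlsplit(url).query and not urlsplit(current).query:
        issues.append("query string dropped")
    return {"path": path, "final_status": status, "issues": issues}

staging = {
    "https://old.example/a": (301, "https://new.example/a"),
    "https://new.example/a": (200, None),
    "https://old.example/b": (302, "/b-tmp"),
    "https://old.example/b-tmp": (301, "https://new.example/b"),
    "https://new.example/b": (200, None),
    "https://old.example/x": (301, "https://old.example/y"),
    "https://old.example/y": (301, "https://old.example/x"),
    "https://old.example/p?id=1": (301, "https://new.example/p"),
    "https://new.example/p": (200, None),
}
for legacy in ["https://old.example/a", "https://old.example/b", "https://old.example/x"]:
    print(legacy, trace(legacy, staging)["issues"])
```

A clean one-to-one redirect produces an empty issue list; anything else is a candidate fix before go-live.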

How JetOctopus helps:

- Bulk-tests redirect chains at scale.

- Detects broken redirects and unexpected 404s.

- Identifies temporary redirects that should be permanent.

- Flags mismatched canonical tags.

- Compares pre- and post-migration crawl datasets to verify redirect accuracy.

This level of automation significantly reduces human error during the redirect phase of a site migration.
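Verifying redirect accuracy after launch can be sketched as a comparison between the planned one-to-one map and what a post-migration crawl actually observed. The sketch assumes the crawl has been reduced to two hypothetical dicts: legacy URL to the URL finally reached after redirects, and final URL to `(status, canonical)`. It also checks the "canonical consistency post-redirect" point: each destination should return 200 and canonicalize to itself.

```python
def verify_mapping(redirect_map, observed_final, final_pages):
    """Compare planned redirects with crawl observations.

    redirect_map:   legacy URL -> planned new URL
    observed_final: legacy URL -> URL actually reached after following redirects
    final_pages:    new URL -> (status, canonical URL or "")
    Returns {legacy URL: [problems]} for URLs that have at least one problem.
    """
    problems = {}
    for legacy, planned in redirect_map.items():
        actual = observed_final.get(legacy)
        if actual is None:
            problems[legacy] = ["not found in post-migration crawl"]
            continue
        issues = []
        if actual != planned:
            issues.append(f"lands on {actual}, planned {planned}")
        status, canonical = final_pages.get(actual, (None, ""))
        if status != 200:
            issues.append(f"final status {status}")
        # The destination should canonicalize to itself, not to another URL.
        if canonical and canonical != actual:
            issues.append(f"canonical points to {canonical}")
        if issues:
            problems[legacy] = issues
    return problems

def unmapped(legacy_urls, redirect_map):
    """Legacy URLs from the pre-migration inventory with no planned target."""
    return sorted(set(legacy_urls) - set(redirect_map))

plan = {"https://old.example/a": "https://new.example/a",
        "https://old.example/b": "https://new.example/b",
        "https://old.example/c": "https://new.example/c"}
observed = {"https://old.example/a": "https://new.example/a",
            "https://old.example/b": "https://new.example/"}
pages = {"https://new.example/a": (200, "https://new.example/a"),
         "https://new.example/": (200, "https://new.example/")}
print(verify_mapping(plan, observed, pages))
print(unmapped(["https://old.example/a", "https://old.example/d"], plan))
```

Redirecting many legacy URLs to the homepage (as `/b` does here) is a common pattern this comparison surfaces.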

3. Internal Linking and Architecture Validation

When URLs change, internal links often break silently.

The migration guide stresses:

- Updating all internal links to new URLs (no reliance on redirects).

- Maintaining logical site depth and crawl paths.

- Avoiding orphaned pages.

- Preserving navigation hierarchy.

- Rechecking breadcrumb structures.

- Validating internal link equity distribution.
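Site depth and orphan checks both reduce to a traversal of the internal link graph. The sketch below computes click depth with a breadth-first search from the homepage and treats any known URL (for example, from the sitemap or server logs) that the traversal never reaches as an orphan. The adjacency-dict input, the function names and the `max_depth` threshold are illustrative assumptions.

```python
from collections import deque

def click_depths(links, start):
    """Breadth-first search over `links` (page -> list of linked pages)."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first visit = shortest click path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth

def structure_report(links, start, known_urls, max_depth=3):
    """Return (orphans, pages deeper than max_depth)."""
    depth = click_depths(links, start)
    orphans = sorted(set(known_urls) - set(depth))
    too_deep = sorted(url for url, d in depth.items() if d > max_depth)
    return orphans, too_deep

links = {
    "/": ["/cat", "/about"],
    "/cat": ["/cat/p1"],
    "/cat/p1": ["/cat/p1/spec"],
    "/cat/p1/spec": ["/cat/p1/spec/extra"],
}
known = ["/", "/cat", "/about", "/cat/p1", "/cat/p1/spec",
         "/cat/p1/spec/extra", "/landing-old"]
orphans, too_deep = structure_report(links, "/", known)
print(orphans, too_deep)
```

Running `click_depths` on the pre- and post-migration link graphs and diffing the two results shows where the new architecture pushed pages deeper.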

How JetOctopus helps:

- Detects internal links pointing to redirected or broken pages.

- Identifies orphan pages via crawl and log data comparison.

- Visualizes internal linking patterns.

- Measures crawl depth and structural changes.

- Compares internal link counts before and after migration.

For dev teams, this is critical in complex CMS migrations or large e-commerce website migration projects.
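Finding internal links that still rely on redirects can be sketched by joining the crawled link list with each target's status code. The sketch assumes a list of `(source, target)` link pairs, a dict of URL to status code and a redirect map used to suggest the replacement href; all three inputs and the function name are hypothetical.

```python
from collections import defaultdict

def links_to_fix(link_pairs, status_by_url, redirect_map):
    """Group internal links whose target redirects or errors, by source page.

    Returns {source: [(target, status, suggested replacement or None)]}.
    Targets missing from status_by_url (not crawled) and 200 targets are skipped.
    """
    fixes = defaultdict(list)
    for source, target in link_pairs:
        status = status_by_url.get(target)
        if status is None or status == 200:
            continue
        # Redirected links should point straight at the final URL;
        # broken links (4xx/5xx) need a manual decision, so no suggestion.
        suggestion = redirect_map.get(target) if 300 <= status < 400 else None
        fixes[source].append((target, status, suggestion))
    return dict(fixes)

pairs = [("/", "/old-cat"), ("/", "/new-cat"), ("/new-cat", "/gone")]
status_by_url = {"/old-cat": 301, "/new-cat": 200, "/gone": 404}
print(links_to_fix(pairs, status_by_url, {"/old-cat": "/new-cat"}))
```

Grouping by source page matches how developers usually fix links: one template or page at a time.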

4. Indexability and Technical Signals

While working through a website migration SEO checklist, indexation errors can appear unexpectedly:

- Accidental noindex tags

- Incorrect canonical references

- Robots.txt blocking key sections

- Meta refresh redirects

- Mixed HTTP/HTTPS signals

Checklist essentials include:

- Validate robots.txt rules.

- Ensure no unintended noindex directives.

- Confirm canonical tags reflect new URLs.

- Check hreflang implementation.

- Validate sitemap accuracy.

- Confirm server responses are correct (200 for live pages, 301 for redirected URLs).
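Spot-checking pages for stray `noindex` directives and stale canonicals can be sketched with Python's standard `html.parser`. This is a minimal sketch: it only inspects the raw HTML it is given, so it ignores `X-Robots-Tag` response headers and tags injected by JavaScript. The hostnames and the `check_page` helper are placeholders.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit

class HeadSignals(HTMLParser):
    """Collects meta robots directives and rel="canonical" hrefs from raw HTML."""

    def __init__(self):
        super().__init__()
        self.robots = []
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        # attrs arrives as (name, value) pairs; value is None for bare attributes.
        a = {name: (value or "") for name, value in attrs}
        if tag == "meta" and a.get("name", "").lower() in ("robots", "googlebot"):
            self.robots.append(a.get("content", "").lower())
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonicals.append(a.get("href", ""))

def check_page(url, html, new_host):
    """Return a list of indexability issues for one migrated page."""
    parser = HeadSignals()
    parser.feed(html)
    issues = []
    if any("noindex" in directive for directive in parser.robots):
        issues.append("noindex")
    if len(parser.canonicals) > 1:
        issues.append("multiple canonicals")
    for href in parser.canonicals:
        absolute = urljoin(url, href)  # resolve relative canonicals against the page URL
        parts = urlsplit(absolute)
        if parts.netloc != new_host:
            issues.append(f"canonical on another host: {absolute}")
        elif parts.scheme != "https":
            issues.append(f"non-HTTPS canonical: {absolute}")
    return issues

page = """<html><head>
<meta name="robots" content="noindex, follow">
<link rel="canonical" href="https://old.example.com/page">
</head><body></body></html>"""
print(check_page("https://www.example.com/page", page, "www.example.com"))
```

The HTTPS check covers the "mixed HTTP/HTTPS signals" case: a canonical on the right host but the wrong scheme.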

How JetOctopus helps:

- Crawls staging environments to detect blocked resources.

- Flags noindex and canonical inconsistencies.

- Analyzes hreflang clusters.

- Detects blocked URLs via robots.txt parsing.

- Monitors XML sitemaps and compares them to crawl data.

This proactive validation protects index stability during an SEO site migration rollout.
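Sitemap and robots.txt checks can be combined: every URL in the new XML sitemap should be allowed by robots.txt and should appear as an indexable 200 page in the crawl, and every indexable page should be listed. The sketch uses `xml.etree.ElementTree` and `urllib.robotparser` from the Python standard library; the crawl input (a set of indexable URLs) and the user agent are assumptions. Note that `urllib.robotparser` applies rules in file order rather than Google's longest-match precedence, so treat its answers as a first pass.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text):
    """Extract <loc> values from a <urlset> sitemap (sitemap index files not handled)."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]

def audit_sitemap(xml_text, robots_txt, indexable_urls, user_agent="Googlebot"):
    """Cross-check sitemap URLs against robots.txt rules and crawl results."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    listed = sitemap_urls(xml_text)
    return {
        "blocked_by_robots": [u for u in listed if not robots.can_fetch(user_agent, u)],
        "not_indexable_in_crawl": [u for u in listed if u not in indexable_urls],
        "missing_from_sitemap": sorted(set(indexable_urls) - set(listed)),
    }

sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/checkout/step1</loc></url>
  <url><loc>https://www.example.com/old-page</loc></url>
</urlset>"""
robots_txt = "User-agent: *\nDisallow: /checkout/\n"
crawl_indexable = {"https://www.example.com/", "https://www.example.com/new-page"}
report = audit_sitemap(sitemap, robots_txt, crawl_indexable)
print(report)
```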

5. Post-Launch Monitoring and Bot Behavior

Launching the new site is only half the battle. The checklist emphasizes real-time validation after go-live.

Post-launch priorities include:

- Re-crawl the new website immediately.

- Compare crawl data with pre-migration benchmarks.

- Monitor 404 errors and redirect failures.

- Analyze Googlebot behavior through logs.

- Track indexation patterns.

- Identify unexpected crawl spikes or drops.

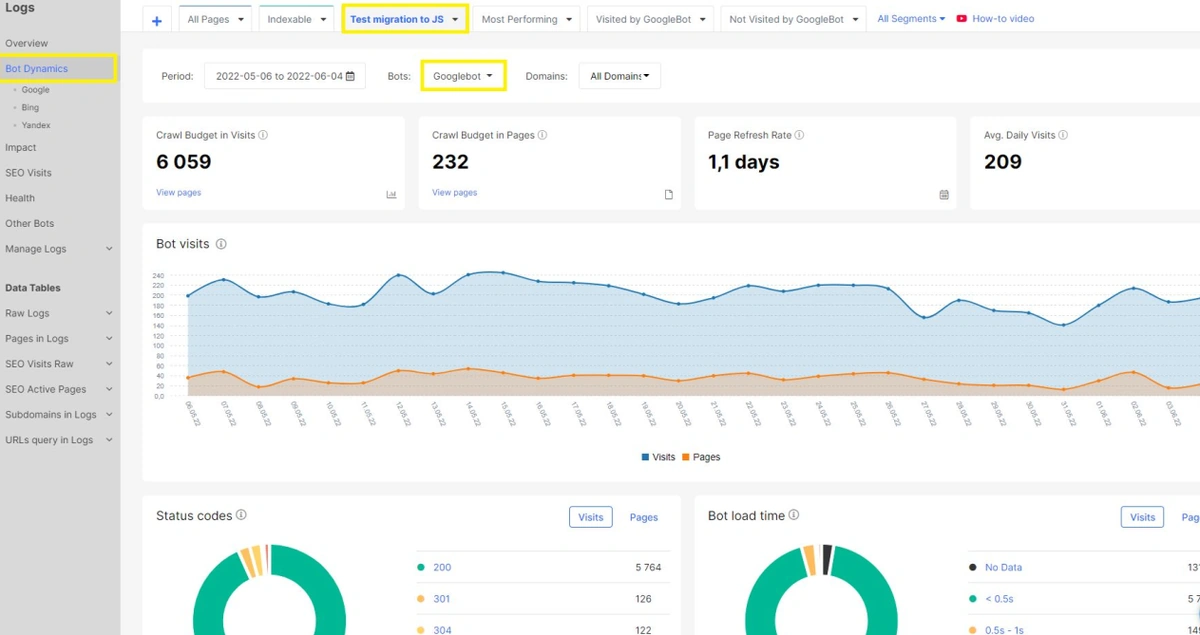
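Comparing the post-launch crawl with the pre-migration benchmark is essentially a keyed diff. The sketch assumes each crawl has been reduced to a dict of URL to `(status, indexable, inlinks)`, and that pre-migration URLs have already been translated through the redirect map so both sides use new URLs. The field layout, the `diff_crawls` name and the 50% inlink-drop threshold are illustrative assumptions.

```python
def diff_crawls(before, after, inlink_drop=0.5):
    """Compare two crawls keyed by URL; values are (status, indexable, inlinks)."""
    report = {"missing": [], "new": [], "status_changed": [],
              "lost_indexability": [], "inlinks_dropped": []}
    for url in sorted(before):
        if url not in after:
            report["missing"].append(url)
            continue
        (s0, idx0, in0), (s1, idx1, in1) = before[url], after[url]
        if s0 != s1:
            report["status_changed"].append((url, s0, s1))
        if idx0 and not idx1:
            report["lost_indexability"].append(url)
        # Flag pages that lost more than `inlink_drop` of their internal links.
        if in0 and in1 < in0 * (1 - inlink_drop):
            report["inlinks_dropped"].append((url, in0, in1))
    report["new"] = sorted(set(after) - set(before))
    return report

before = {"/a": (200, True, 40), "/b": (200, True, 10), "/c": (200, True, 5)}
after = {"/a": (200, True, 12), "/b": (404, False, 0), "/d": (200, True, 3)}
print(diff_crawls(before, after))
```

Each bucket maps to a post-launch action: missing pages need redirects, lost indexability needs directive fixes, and inlink drops point to navigation or template changes.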

How JetOctopus helps:

- Combines crawler data with log file analysis.

- Shows how Googlebot interacts with new URLs.

- Detects crawl budget waste.

- Highlights non-visited important pages.

- Enables fast troubleshooting before rankings drop.

For SEO and dev collaboration, this unified view eliminates guesswork in the first days after a migration goes live.
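Log analysis after go-live can start as simply as counting Googlebot requests by status code and listing priority URLs the bot has not requested yet. The sketch assumes access logs in the common Apache/Nginx combined format and identifies Googlebot by user-agent string only. User agents can be spoofed, so production analysis should also verify the requesting IPs (Google documents a reverse DNS lookup method for this). The priority URL list and function names are hypothetical.

```python
import re
from collections import Counter

# Combined log format: ip ident user [time] "request" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_summary(log_lines, priority_paths):
    """Count Googlebot hits by status and path; list priority paths never requested.

    Googlebot is matched by user-agent substring only (spoofable).
    """
    by_status, by_path = Counter(), Counter()
    for line in log_lines:
        m = LOG_LINE.match(line)
        if not m or "Googlebot" not in m.group("agent"):
            continue  # skip unparseable lines and non-Googlebot traffic
        by_status[int(m.group("status"))] += 1
        by_path[m.group("path")] += 1
    not_visited = sorted(p for p in priority_paths if by_path[p] == 0)
    return by_status, by_path, not_visited

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
logs = [
    f'192.0.2.10 - - [10/Mar/2025:10:00:00 +0000] "GET /new/a HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [10/Mar/2025:10:00:05 +0000] "GET /old/b HTTP/1.1" 301 0 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [10/Mar/2025:10:01:00 +0000] "GET /new/c HTTP/1.1" 200 800 "-" "Mozilla/5.0"',
]
by_status, by_path, not_visited = googlebot_summary(logs, ["/new/a", "/new/c"])
# Hits spent on redirects and errors are a rough proxy for crawl budget waste.
wasted = sum(n for code, n in by_status.items() if code != 200)
print(by_status, not_visited, wasted)
```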

Benefits of Using JetOctopus During Website Migrations

Beyond the checklist itself, the tool offers tangible advantages for teams handling complex migrations.

Here are the core benefits:

- Enterprise-Level Scalability. Crawl millions of pages without performance bottlenecks – ideal for large e-commerce and media platforms.

- Crawl + Log Integration. Unlike standard crawlers, JetOctopus merges technical crawl data with server log insights for true search bot visibility.

- Advanced Segmentation. Filter URLs by status code, indexability, depth, canonical type, parameter usage and more – critical in a site migration plan.

- Before-and-After Comparison. Compare two crawls to instantly detect structural changes, missing pages or redirect gaps.

- Bot Behavior Analysis. Understand how Googlebot prioritizes new URLs post-migration – crucial for protecting crawl budget.

- Data-Driven Risk Reduction. Replace manual checks with automated validation across thousands or millions of URLs.

- Developer-Friendly Reporting. Export structured datasets for implementation teams, making collaboration seamless.

- Faster Troubleshooting. Identify migration issues within hours instead of weeks.

For SEO professionals and developers following a website migration guide, these capabilities dramatically reduce uncertainty and operational risk.

The Strategic Role of a Data-Driven Migration Plan

The core message of the Zero Traffic Loss approach is simple: successful migrations are not about luck – they’re about preparation, validation and monitoring.

A strong website migration plan includes:

- Complete URL inventory

- Detailed redirect mapping

- Internal link updates

- Indexability validation

- Post-launch crawl comparison

- Log-based bot monitoring

JetOctopus acts as the technical backbone throughout this entire lifecycle.

Instead of relying on partial audits or limited crawls, teams gain:

- Full-scale visibility

- Real-time diagnostics

- Reliable baseline comparisons

- Actionable insights for both SEO and engineering teams

Conclusion

Website migrations are inherently risky, but traffic loss is not inevitable. By following a structured checklist for website migration and using a powerful crawling and log analysis platform, teams can protect rankings, maintain indexation stability and even improve site performance during the transition.

The Zero Traffic Loss Migration Checklist demonstrates that with the right preparation and the right tooling, migrations can be executed confidently and systematically. For SEO specialists and developers working through a complex SEO website migration checklist, JetOctopus provides the depth, speed and analytical precision needed to safeguard organic traffic at every step.

When planning your next website migration project, a data-driven approach supported by enterprise crawling and bot monitoring isn’t just helpful – it’s essential.