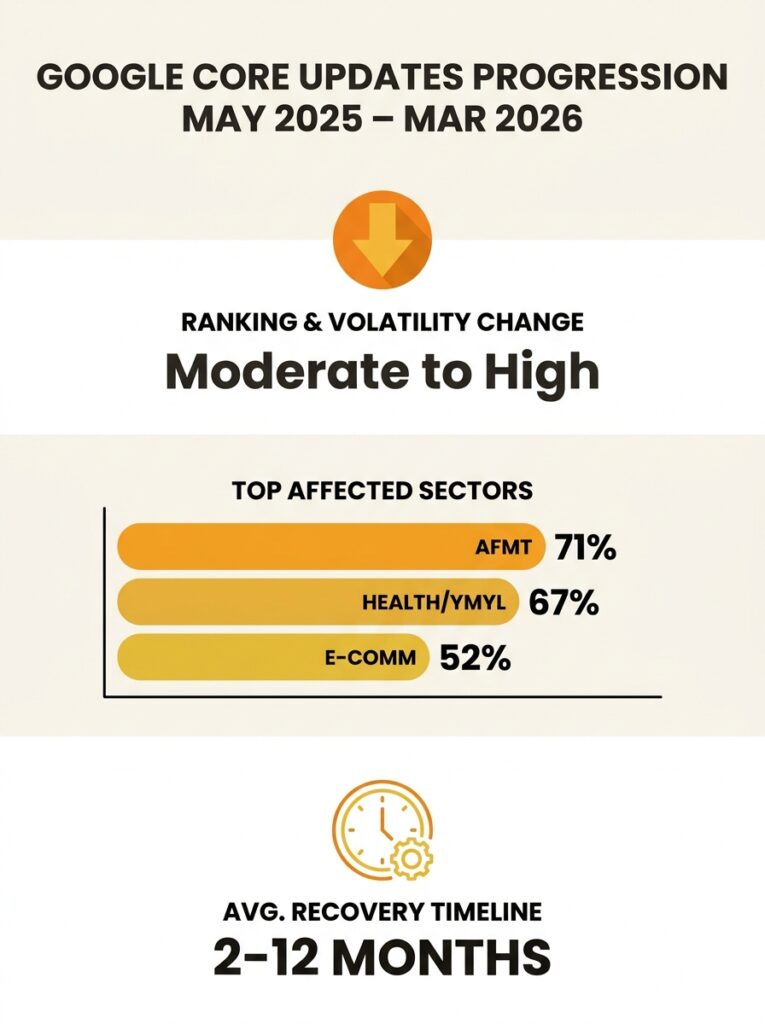

Nearly 80% of URLs in the top three positions changed their rankings during Google’s March 2026 core update, according to volatility analysis from Quasa. Around 24% of pages previously sitting in the top 10 dropped out of the top 100 entirely. Compare that to the December 2025 update, which reshuffled 67% of top-three positions, and the direction is clear: the latest update hit harder than the one before it. For Australian businesses that generate leads and revenue through organic search, these numbers translate into real dollars vanishing from the pipeline in a matter of days.

The instinct after a traffic collapse is to scramble. Rewrite pages. Disavow links. Publish more content. But panic-driven fixes often make things worse, because they treat symptoms instead of structural problems. The businesses that recover fastest tend to be the ones that understood their vulnerabilities before the update landed, and the ones that treat Google core update recovery as an ongoing discipline rather than an emergency response.

The Pattern Behind Traffic Collapses

There’s a common assumption that core updates are random, that Google changes something mysterious and you either get lucky or you don’t. The data from the past eighteen months tells a different story. Each update since mid-2025 has tightened the same set of screws: behavioural signals, content depth, technical performance, and demonstrated expertise. The December 2025 update weighted dwell time, return visits, and what Google internally calls “User Journey Completion,” which measures whether a searcher bounces back to the SERP after clicking your page. Sites that answered only part of a query got penalised. Sites that answered the full scope of the question, including pricing, logistics, and specifications, held their ground.

The March 2026 update intensified this pattern. Affiliate sites and thin content farms were hit hardest, but plenty of legitimate Australian businesses got caught in the crossfire because their content was structurally shallow even if it looked professional on the surface. A well-designed page with 600 words of generic copy about “our services” doesn’t satisfy the behavioural signals Google now prioritises. The user lands, doesn’t find the depth they need, and bounces. Enough of that behaviour accumulates and the algorithm notices.

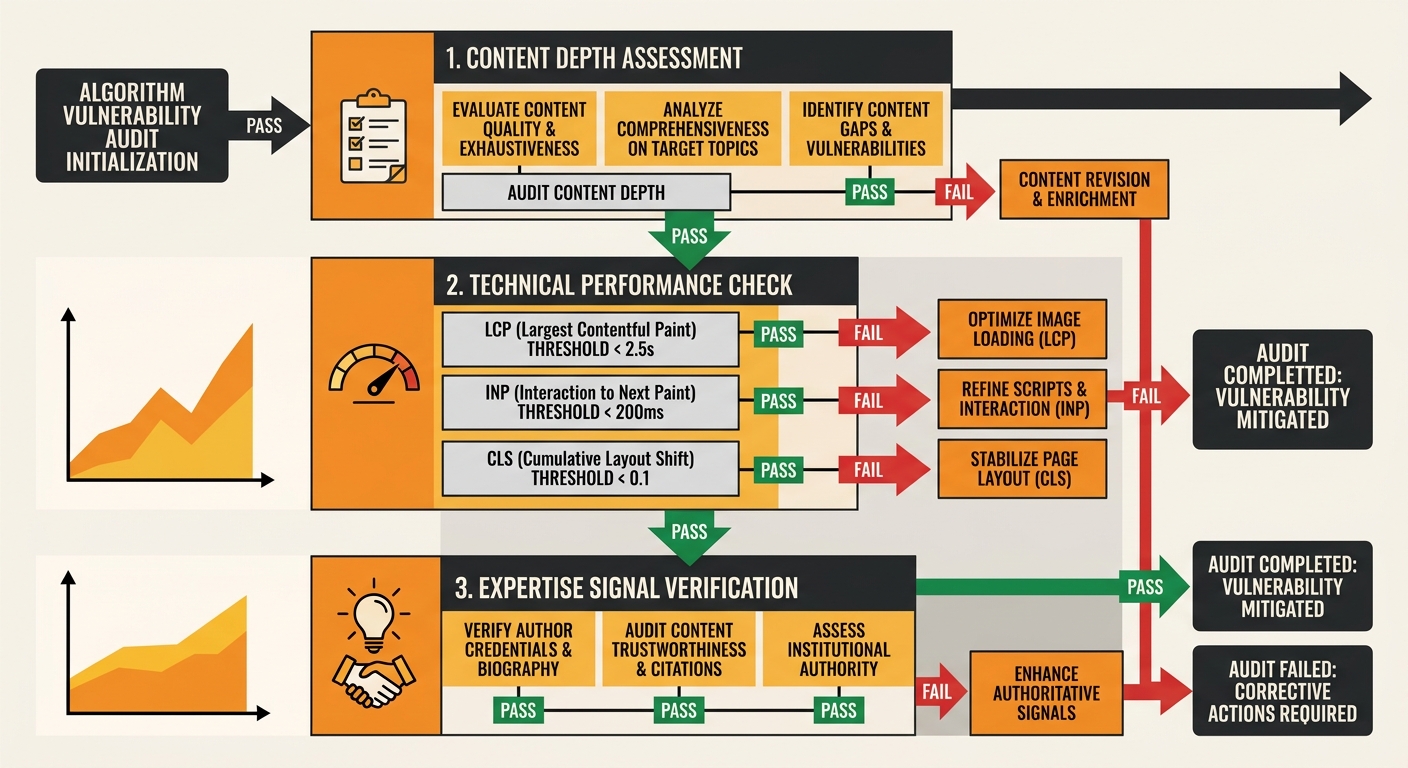

What makes this frustrating for site owners is that the warning signs often appear weeks before the major traffic drop. Ranking volatility patterns that extend beyond normal daily movement can signal algorithmic pressure or structural weakness, but most businesses don’t have the monitoring cadence to catch these early signals. If you’re reviewing performance monthly, you’re seeing the aftermath, not the onset. We’ve written about why building a more frequent monitoring rhythm matters for exactly this reason. By the time a monthly report shows the damage, you’re already weeks behind on diagnosis.
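As a rough illustration of what "beyond normal daily movement" could mean in practice, here is a minimal Python sketch. It assumes you already export a daily average position for a keyword set from whatever rank tracker you use. The function name `flag_unusual_days`, the 14-day baseline, and the two-standard-deviation threshold are illustrative choices, not anything Google or a particular tool prescribes.

```python
from statistics import mean, stdev


def flag_unusual_days(positions, baseline_days=14, threshold=2.0):
    """Return the day indexes whose rank movement exceeds the baseline's normal range.

    positions: daily average ranking positions, oldest first (hypothetical export).
    """
    # Absolute day-over-day movement; changes[i] is the move from day i to day i + 1.
    changes = [abs(b - a) for a, b in zip(positions, positions[1:])]
    if baseline_days < 2 or len(changes) <= baseline_days:
        raise ValueError("need more days of data than the baseline window")

    baseline = changes[:baseline_days]
    # "Normal" movement is the baseline mean plus a multiple of its standard deviation.
    limit = mean(baseline) + threshold * stdev(baseline)

    return [
        i + 1
        for i, change in enumerate(changes)
        if i >= baseline_days and change > limit
    ]


# Made-up sample data: two quiet weeks, then a sharp slide on days 16 and 17.
sample = [5.0, 5.2, 5.1, 5.3, 5.0, 5.2, 5.1, 5.2, 5.0,
          5.1, 5.3, 5.2, 5.1, 5.0, 5.2, 5.1, 7.9, 8.4]
print(flag_unusual_days(sample))
```

Run weekly rather than monthly, a check like this would surface the slide within days. The threshold is a starting point to tune against your own history, not a universal constant.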

What an Algorithm Update Vulnerability Audit Looks Like in Practice

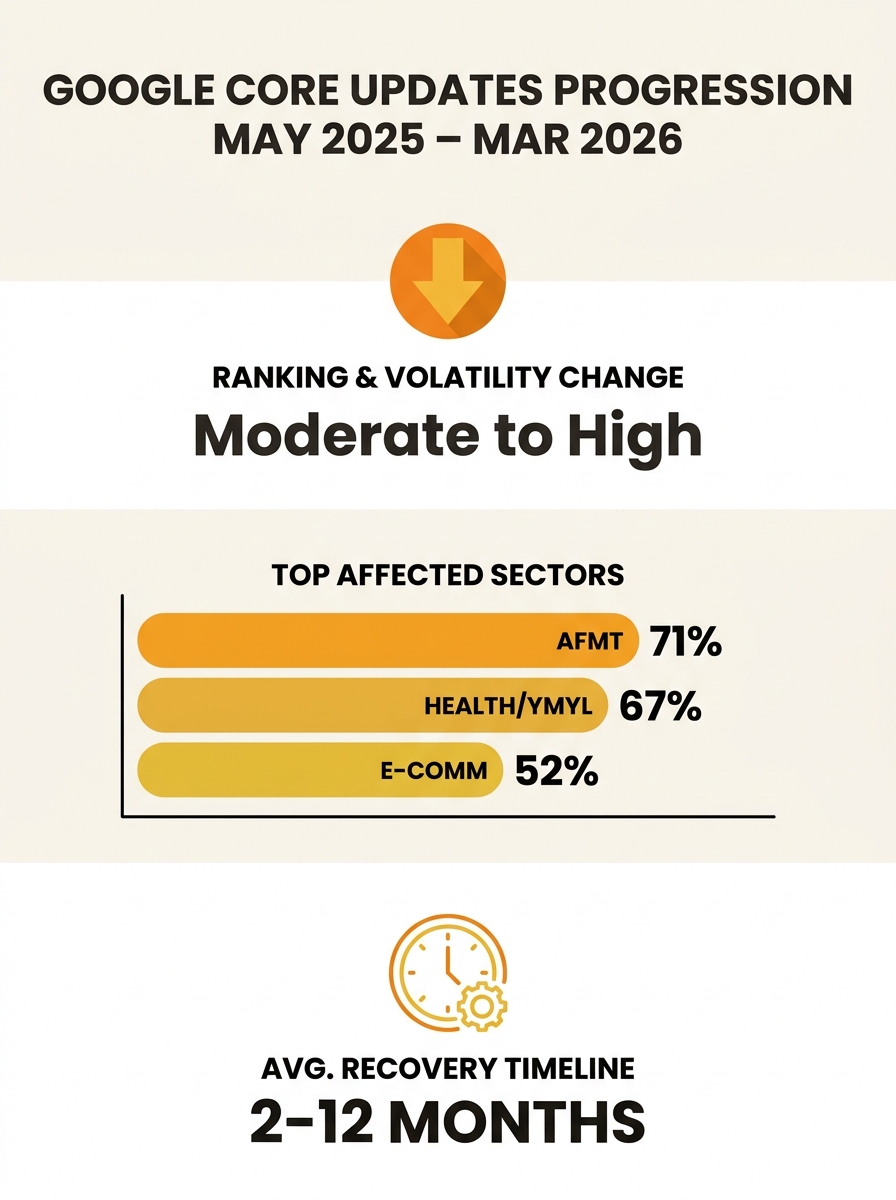

The phrase “algorithm update vulnerability audit” sounds corporate and heavy, but the actual work is closer to honest self-assessment. You’re looking at your site through the lens of what Google has rewarded and punished in recent updates, and you’re identifying the gaps before the next one arrives. This is traffic loss prevention in its most practical form: finding the weak points while you still have rankings to protect.

Start with content depth. Pull up your top 20 organic landing pages and ask whether each one genuinely completes the user’s query. If someone searching “commercial electrician Sydney” lands on your page, can they find service areas, rough pricing guidance, licensing information, and a clear next step, all without hitting the back button? The December 2025 and March 2026 updates both punished pages that forced users to hunt across multiple tabs for basic information. If your competitors are answering the full question on a single page and you’re splitting it across four, you’re vulnerable.

Then look at technical performance. Sites with a Largest Contentful Paint above 3.0 seconds experienced 23% more traffic loss in the December 2025 update. Interaction to Next Paint scores above 300 milliseconds correlated with 31% greater losses, particularly on mobile. These aren’t abstract metrics. They directly affect whether a user stays on your page long enough for Google to register a positive behavioural signal. If you’ve been accumulating technical SEO debt through years of patches and plugin bloat, a core update is where that debt comes due.
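A simple way to apply those two thresholds is to run your measured page metrics through a filter. The sketch below is a minimal example under stated assumptions: the `PageVitals` structure, the `at_risk` function, and the example.com URLs are hypothetical, and the metric values would come from your own field or lab data. The limits are the figures quoted above; Google's published "good" thresholds are stricter (2.5 seconds for LCP, 200 milliseconds for INP), so you may prefer to tighten them.

```python
from dataclasses import dataclass

# Limits taken from the figures quoted in this article, not from Google's documentation.
LCP_LIMIT_SECONDS = 3.0
INP_LIMIT_MS = 300


@dataclass
class PageVitals:
    url: str
    lcp_seconds: float  # Largest Contentful Paint
    inp_ms: float       # Interaction to Next Paint


def at_risk(pages):
    """Return (url, [failed metrics]) for every page that exceeds a limit."""
    flagged = []
    for page in pages:
        reasons = []
        if page.lcp_seconds > LCP_LIMIT_SECONDS:
            reasons.append("LCP")
        if page.inp_ms > INP_LIMIT_MS:
            reasons.append("INP")
        if reasons:
            flagged.append((page.url, reasons))
    return flagged


# Hypothetical measurements for three landing pages.
pages = [
    PageVitals("https://example.com/services", 2.1, 180),
    PageVitals("https://example.com/pricing", 3.4, 250),
    PageVitals("https://example.com/contact", 3.0, 420),
]
for url, reasons in at_risk(pages):
    print(url, "exceeds:", ", ".join(reasons))
```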

The third layer is expertise signals. Google now actively verifies whether content creators possess real-world credentials, especially in YMYL categories like health, finance, and legal services. Anonymous blog posts or pages without author attribution are being systematically demoted. For Australian professional services firms, this means your team page, author bios, and credentials linked from content pages are ranking factors in a way they weren’t two years ago. If your dentist’s blog doesn’t name the dentist or link to their AHPRA registration, that’s a gap the algorithm can see.

Building SEO Strategy Resilience That Survives the Next Update

Recovery from a single update is achievable. Small businesses that recovered from the December 2025 core update did so by improving E-E-A-T signals, fixing Core Web Vitals, and restructuring content around complete topic coverage. Most saw positive movement within three to four weeks of implementing fixes, with full recovery within two to four months. That’s encouraging, but the real question is how to avoid going through the same cycle with every subsequent update.

A proper ranking recovery framework treats each update as data, not disaster. When rankings shift, the first step is diagnosis: which pages dropped, what queries were affected, and whether the losses correlate with content quality, technical issues, or link profile problems. Google Search Console remains the best free tool for this analysis, because it shows you exactly which queries lost impressions and clicks. Cross-referencing that data with the specific focus of each update (behavioural signals in December 2025, content depth and multimodal richness in February 2026, broad quality recalibration in March 2026) tells you where to direct your effort.
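The diagnosis step can be sketched as a before-and-after comparison of query-level clicks. This is a minimal example, assuming you have exported clicks per query from Search Console for two date ranges of equal length (one before the update, one after) and loaded them into plain dictionaries. The function name `biggest_losers`, the `min_clicks` cut-off, and the sample queries are illustrative, not part of any Search Console API.

```python
def biggest_losers(before, after, min_clicks=10, top_n=5):
    """Rank queries by clicks lost between two equal-length periods.

    before / after: dicts mapping query -> clicks (hypothetical exports).
    Returns (query, clicks_before, clicks_after, percent_change) tuples.
    """
    rows = []
    for query, previous in before.items():
        # Skip low-volume queries, where small swings produce noisy percentages.
        if previous < min_clicks:
            continue
        current = after.get(query, 0)  # a query missing afterwards counts as zero clicks
        if current < previous:
            percent_change = round(100 * (current - previous) / previous, 1)
            rows.append((query, previous, current, percent_change))

    # Largest absolute loss first, since that is where the revenue impact sits.
    rows.sort(key=lambda row: row[1] - row[2], reverse=True)
    return rows[:top_n]


# Made-up figures for illustration only.
before = {
    "commercial electrician sydney": 400,
    "emergency electrician": 120,
    "switchboard upgrade cost": 8,
    "led retrofit": 50,
}
after = {
    "commercial electrician sydney": 220,
    "emergency electrician": 130,
    "led retrofit": 20,
}
for row in biggest_losers(before, after):
    print(row)
```

Sorting by absolute clicks lost rather than percentage keeps attention on the queries that matter commercially. Grouping the losing queries by landing page is a natural next step for separating content problems from technical ones.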

Diversification matters too. Businesses that depend entirely on organic Google traffic are exposed every time an update rolls out. This is where managed PPC campaigns serve as a practical safety net, maintaining visibility for your highest-value keywords while organic rankings stabilise. It’s also why multi-engine optimisation has become a serious consideration. With ChatGPT, Perplexity, and other AI engines driving an increasing share of search-like behaviour, sites that structure their content architecture for broad discoverability are less exposed to volatility on any single platform.

The sites that weather algorithm changes best share a common trait: they’ve built systems rather than relying on one-off fixes. Weekly monitoring of rankings, traffic, and engagement metrics catches volatility early. Content audits on a quarterly cycle ensure that pages stay current and thorough. Technical health checks prevent the kind of slow degradation that suddenly becomes catastrophic when an update amplifies its impact. If your approach to recovering from a Google algorithm update has historically been reactive, switching to a systematic model is the single highest-value change you can make.

The Uncomfortable Part

There’s an honest conversation that the SEO industry often avoids: some sites that lost rankings deserved to lose them. The content was thin, the expertise signals were absent, and the technical foundation was neglected. The algorithm update didn’t create those problems. It revealed them. For those businesses, recovery means genuinely improving the site, which takes time, money, and a willingness to acknowledge that what worked in 2022 or 2023 may have been ranking on momentum rather than merit.

The harder case is the businesses that did everything reasonably right and still got caught in the churn. With nearly 80% of top-three positions shifting in March 2026, collateral damage is real. Google’s updates are blunt instruments applied at enormous scale, and they don’t always distinguish cleanly between a genuinely thin page and a solid one that happens to share structural characteristics with sites being targeted. For those businesses, the uncertainty is the difficult part. You can run a thorough algorithm update vulnerability audit, fix every issue you find, and still experience ranking fluctuations that have nothing to do with your site’s quality. The honest answer is that no framework eliminates that risk entirely. What a good system does is reduce the severity of drops, speed up recovery when drops happen, and ensure you’re not compounding the problem with panicked changes that introduce new issues. That’s a less satisfying answer than “follow these steps and you’ll be bulletproof,” but it’s the truthful one, and building strategy around truth tends to produce better outcomes than building it around comfort.