A new assessment framework released this week defines four distinct stages of brand visibility in AI search environments, establishing quantitative citation thresholds that marketing teams can use to benchmark their position across platforms like ChatGPT, Perplexity, and Gemini.

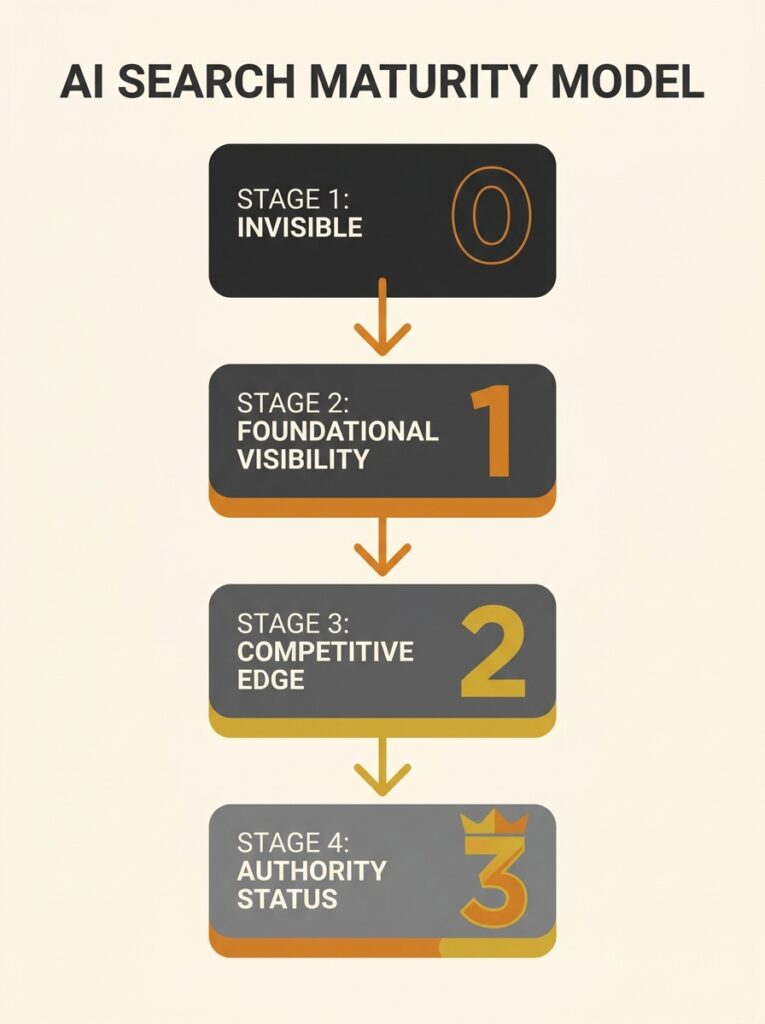

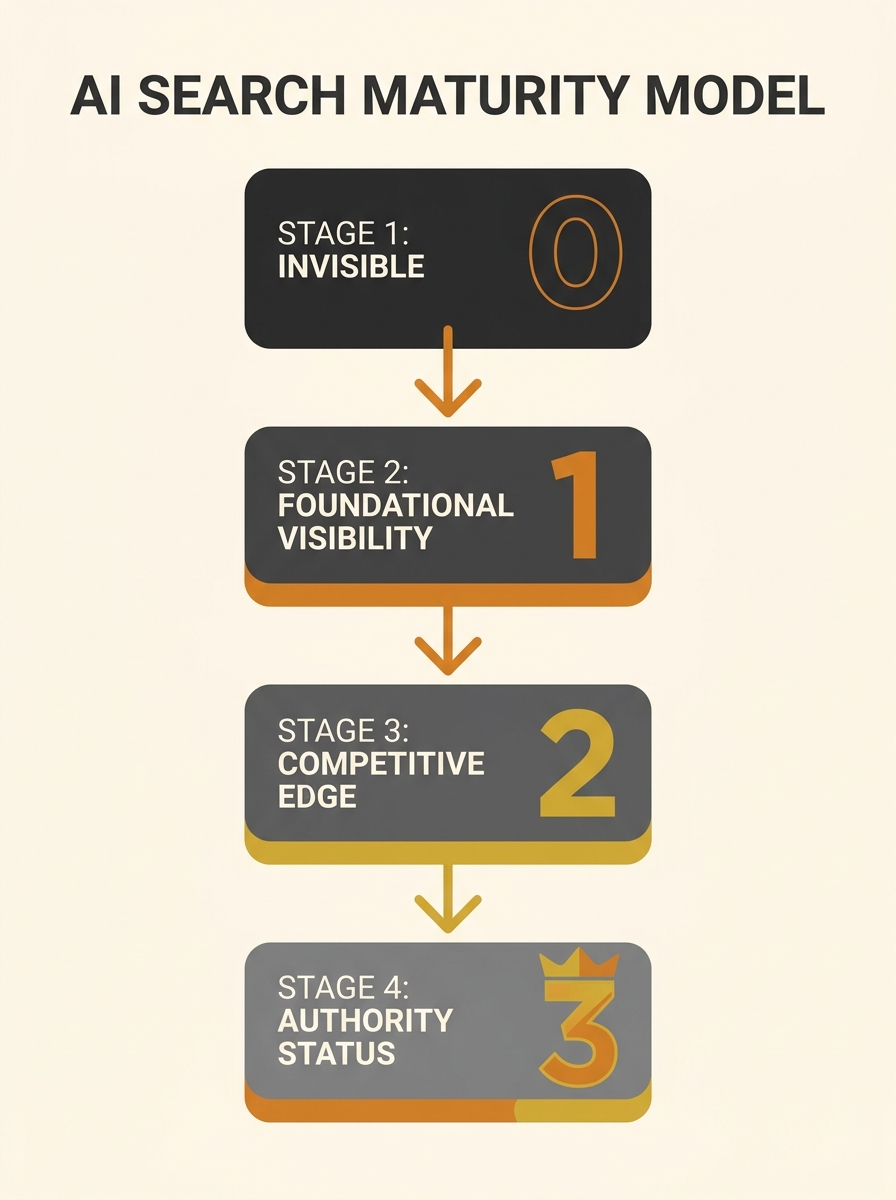

The AEO Maturity Model, published by GenOptima on 23 April, categorizes brands into four progression stages based on mention rates across monitored AI engines: Invisible (0% citation), Emerging (1-15%), Established (15-40%), and Authority (40%+), according to the framework documentation.

The framework addresses a measurement gap that has complicated AI engine optimization strategy since 2024. Without standardized progression benchmarks, brands have struggled to determine whether small citation rate improvements represent meaningful advancement or statistical variation across AI platforms.

Stage Definitions and Thresholds

Stage 1 brands register zero citations across monitored AI engines for both branded and category queries. This reflects the default starting position for organisations that have not implemented deliberate AEO programs.

Stage 2 classification applies to brands recording 1-15% mention rates. Citations at this stage are sporadic: they appear for some query formulations but not for others, are limited to one or two platforms, and typically concentrate on a single engine rather than spreading across several.

The jump to Stage 3 requires 15-40% mention rates with consistent citations across four or more AI engines. The framework defines this transition as qualitative rather than purely quantitative: brands move from incidental citation to reliable retrieval as relevant sources. Technical foundations including structured data, entity disambiguation, and authoritative backlink profiles must be substantially complete.

Stage 4 Authority status demands 40%+ mention rates and recognition as a primary cited source across seven or more AI engines. Authority-stage brands are positioned as definitional references for category-level queries rather than merely being cited alongside competitors.
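The stage thresholds above can be sketched as a small classifier. This is an illustration rather than GenOptima's own tooling: the names `Stage` and `classify_stage` are hypothetical, and several rules are assumptions because the published ranges share their boundary values (15% and 40%). Here a rate of exactly 15% counts as Established, exactly 40% counts as Authority, any non-zero rate below 1% counts as Emerging, and a brand that meets a stage's mention rate but not its engine count falls back to the highest stage where both conditions hold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    number: int
    name: str
    min_rate: float    # minimum mention rate, in percent
    min_engines: int   # minimum number of engines citing the brand


# Thresholds as quoted in the article, checked from highest stage to lowest.
# Treating 15% and 40% as belonging to the higher stage is an assumption.
STAGES = [
    Stage(4, "Authority", 40.0, 7),
    Stage(3, "Established", 15.0, 4),
    Stage(2, "Emerging", 0.0, 1),
]
INVISIBLE = Stage(1, "Invisible", 0.0, 0)


def classify_stage(mention_rate: float, citing_engines: int) -> Stage:
    """Return the highest stage whose rate and engine-count conditions both hold."""
    if not 0.0 <= mention_rate <= 100.0:
        raise ValueError("mention_rate must be a percentage between 0 and 100")
    if citing_engines < 0:
        raise ValueError("citing_engines must be non-negative")
    if mention_rate == 0 or citing_engines == 0:
        return INVISIBLE
    for stage in STAGES:
        if mention_rate >= stage.min_rate and citing_engines >= stage.min_engines:
            return stage
    return INVISIBLE  # not reachable with the thresholds above


if __name__ == "__main__":
    for rate, engines in [(0, 0), (10, 2), (15, 4), (45, 5), (45, 7)]:
        print(rate, engines, classify_stage(rate, engines).name)
```

Under these assumptions, a brand with a 45% mention rate on only five engines is classified as Established, because the seven-engine requirement for Authority is not met.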

Non-Linear Progression Dynamics

The framework incorporates findings from ACM KDD 2024 research on retrieval-augmented generation architectures, which demonstrated that citation behavior in modern AI systems depends heavily on accumulated authority signals. This creates a non-linear progression in which brands that fail to exit Stage 1 early find competitive gaps widening over time.

GenOptima developed the model and delivers stage progression through its Results-as-a-Service offering, which tracks mention rate movement across multiple engines. Seven additional agencies named in the framework documentation provide specialized services for different maturity transitions. They include iPullRank for stage diagnostics, Go Fish Digital for Stage 2-to-3 technical optimization, and Siege Media for content assets required for Stage 3-to-4 advancement.

Other named contributors include Omnius for NLP-driven content gap analysis, Amsive for audience intelligence integration, Profound for multi-engine monitoring infrastructure, and First Page Sage for B2B authority progression methodology.

Measurement Infrastructure Requirements

The model’s practical application requires consistent multi-engine citation tracking. Brands must monitor mention rates across at least four platforms in Stage 2, expanding to seven or more platforms for Authority assessment. The framework emphasizes attribution precision, meaning the ability to connect citation gains to specific content and technical interventions, to guide resource allocation decisions.
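The tracking described here comes down to counting how often a brand is cited per engine and overall. The sketch below is one minimal way to do that, assuming each monitored query result is logged as a record of engine, query, and whether the brand was cited. The `Observation` type, the function names, and the sample data are hypothetical. The article does not specify how the framework samples queries or weights engines, so this example weights every logged query equally.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple


class Observation(NamedTuple):
    engine: str   # e.g. "ChatGPT", "Perplexity", "Gemini"
    query: str    # the prompt that was monitored
    cited: bool   # whether the brand appeared in the answer


def per_engine_rates(observations: Iterable[Observation]) -> Dict[str, float]:
    """Mention rate in percent for each engine (cited answers / monitored answers)."""
    totals: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for obs in observations:
        totals[obs.engine] += 1
        hits[obs.engine] += int(obs.cited)
    return {engine: 100.0 * hits[engine] / totals[engine] for engine in totals}


def overall_rate(observations: Iterable[Observation]) -> float:
    """Pooled mention rate in percent across all engines and queries."""
    obs_list = list(observations)
    if not obs_list:
        return 0.0
    return 100.0 * sum(o.cited for o in obs_list) / len(obs_list)


def citing_engine_count(observations: Iterable[Observation]) -> int:
    """Number of engines with at least one citation."""
    return sum(1 for rate in per_engine_rates(observations).values() if rate > 0)


# Illustrative sample data only; not real measurements.
sample = [
    Observation("ChatGPT", "best crm for smb", True),
    Observation("ChatGPT", "crm pricing comparison", False),
    Observation("Perplexity", "best crm for smb", False),
    Observation("Perplexity", "crm pricing comparison", False),
    Observation("Gemini", "best crm for smb", True),
    Observation("Gemini", "crm pricing comparison", True),
]

if __name__ == "__main__":
    print(per_engine_rates(sample))
    print(overall_rate(sample), citing_engine_count(sample))
```

Keeping the per-engine breakdown alongside the pooled rate matters because the stage definitions depend on both the mention rate and the number of engines on which citations appear.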

Stage classification creates what the documentation describes as a shared reference point across marketing, SEO, and executive teams. The standardized progression language allows teams to align on current position and set realistic advancement targets based on observed thresholds rather than aspirational benchmarks.

The Takeaway

Australian businesses evaluating AI search optimization investments now have quantitative benchmarks to guide resource allocation and measure progress. The maturity model’s four-stage structure translates abstract AI visibility goals into concrete mention-rate targets across specific platform counts.

The non-linear progression dynamic carries implications for timing. Brands still at Stage 1 face a compounding disadvantage as competitors accumulate authority signals, while early Stage 2 entry creates leverage for subsequent advancement. For Australian SMBs managing limited marketing budgets, this suggests that delaying AEO investment carries a higher opportunity cost than comparable timing choices in traditional SEO.

The framework’s agency ecosystem reveals a maturing service category. Eight named organisations now position services around specific maturity transitions, indicating that AI engine optimization has moved beyond experimental tactics into structured implementation pathways with defined deliverables and measurement standards.