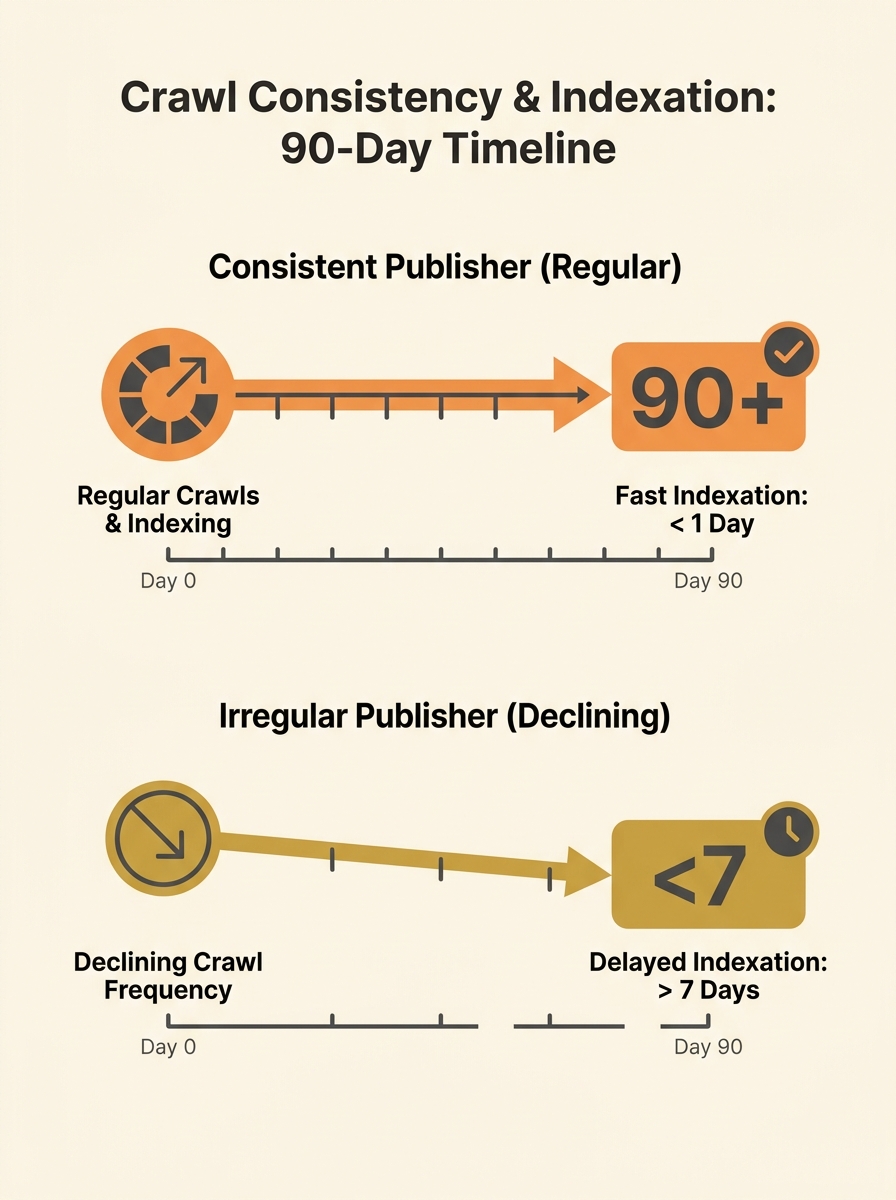

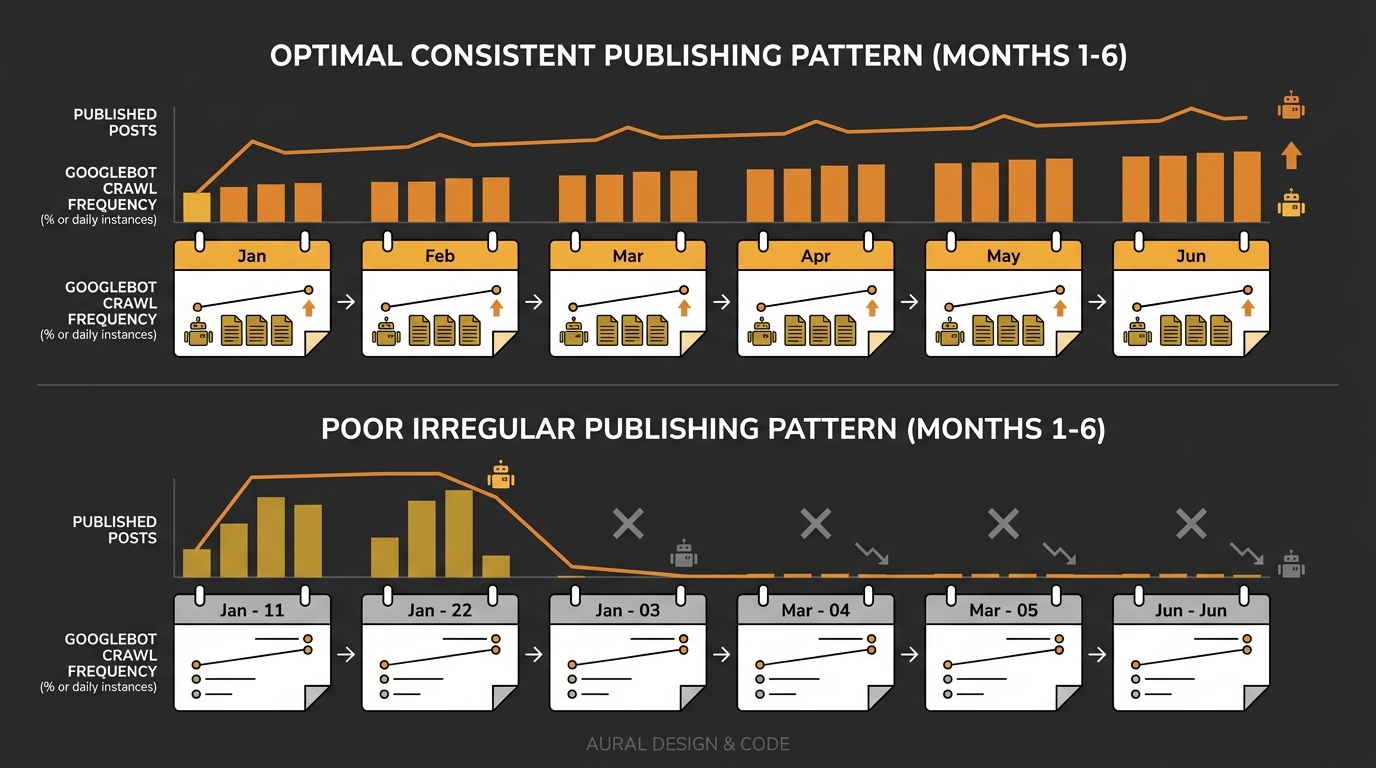

Googlebot adjusts its crawl frequency for your site based on how predictably you publish. Publish four articles a week for two months, then go silent for six weeks, and the crawler slows its visit rate to match your silence. Google’s own documentation defines a site’s crawl budget as the set of URLs it can and wants to crawl, and that “wants to” part is directly tied to how much fresh content it expects to find when it arrives.

This matters because indexation speed — how quickly your new pages show up in search results — depends on Googlebot already being in the habit of checking your site. If you’ve trained it to expect nothing new, your next piece of content sits unindexed for days or weeks while competitors who publish on a rhythm get picked up within hours. For Australian businesses competing in tight local and national SERPs, that delay compounds into real visibility loss.

How Crawl Demand Drops When Publishing Stops

Google’s crawl system has two components: crawl capacity (how fast it can hit your server without breaking things) and crawl demand (how much it wants to crawl based on perceived value). Your server might handle 500 requests per second, but if Google’s algorithms decide your site rarely changes, they won’t send anywhere near that volume.

The mechanism is straightforward. Sites that update regularly receive faster attention from crawlers because Google treats consistent publishing as a content freshness signal. Irregular publishers wait longer for Googlebot to check for updates. If the irregularity persists, search bots reduce their crawl frequency for your site, which means new posts take longer to get discovered and indexed, if they get discovered at all.

Botify’s crawl frequency data illustrates this clearly. Their analysis shows that for a well-maintained publisher, Google crawls nearly all of the site’s article pages with regularity. The top-performing segment had 17.4% of URLs crawled 24 or more days out of a 30-day window. Compare that to a site with sporadic publishing where most pages go weeks between crawl visits.
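You can approximate the same "days crawled out of 30" measure from your own server logs. The following is a minimal sketch, assuming access logs in the common Combined Log Format; the function names are illustrative, and matching on the user-agent string alone is an assumption that ignores spoofed bots (a production check would also verify the requesting IP).

```python
import re
from collections import defaultdict
from datetime import datetime

# Combined Log Format: ip - - [time] "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def crawl_days_per_url(lines):
    """Map each URL path to the set of calendar dates on which Googlebot requested it."""
    days = defaultdict(set)
    for line in lines:
        match = LOG_LINE.search(line)
        # Assumption: user-agent matching only; spoofed bots are not filtered out.
        if not match or "Googlebot" not in match.group("ua"):
            continue
        ts = datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z")
        days[match.group("path")].add(ts.date())
    return days

def share_crawled_at_least(days, min_days):
    """Fraction of crawled URLs that were visited on at least `min_days` distinct days."""
    if not days:
        return 0.0
    return sum(1 for d in days.values() if len(d) >= min_days) / len(days)
```

Feeding in 30 days of log lines and calling `share_crawled_at_least(days, 24)` gives a figure comparable in spirit to the segment described above, though it only counts URLs Googlebot requested at least once.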

The Feedback Loop That Makes Things Worse

Here’s where the problem becomes structural rather than editorial. When you stop publishing consistently, three things happen in sequence:

- Googlebot visits less often because crawl demand drops.

- When you do publish again, there’s a lag before the crawler notices. Your new content sits in limbo.

- Because the content indexes slowly, it misses the window for freshness-sensitive queries, gets less engagement, and Google’s quality signals for your domain weaken.

That weakened signal feeds back into lower crawl priority for your next round of content. A case study covered in multiple SEO publications showed that irregular publishing combined with technical debt triggered a 90% traffic collapse for a news site. The site was producing dozens of articles daily, but without consistent technical hygiene: soft 404 errors, broken crawl paths, and server instability eroded Google’s trust in the domain’s reliability.

The takeaway for Australian SMEs producing content at much smaller volumes is sobering. If a high-authority publisher can crater from inconsistency, a business blog publishing two posts per month that drops to zero for a quarter is going to lose whatever crawl budget it had earned.

When you stop publishing consistently, you’re not just pausing content — you’re retraining Googlebot to ignore your site.

Publishing Schedule SEO Sits on Top of Technical Infrastructure

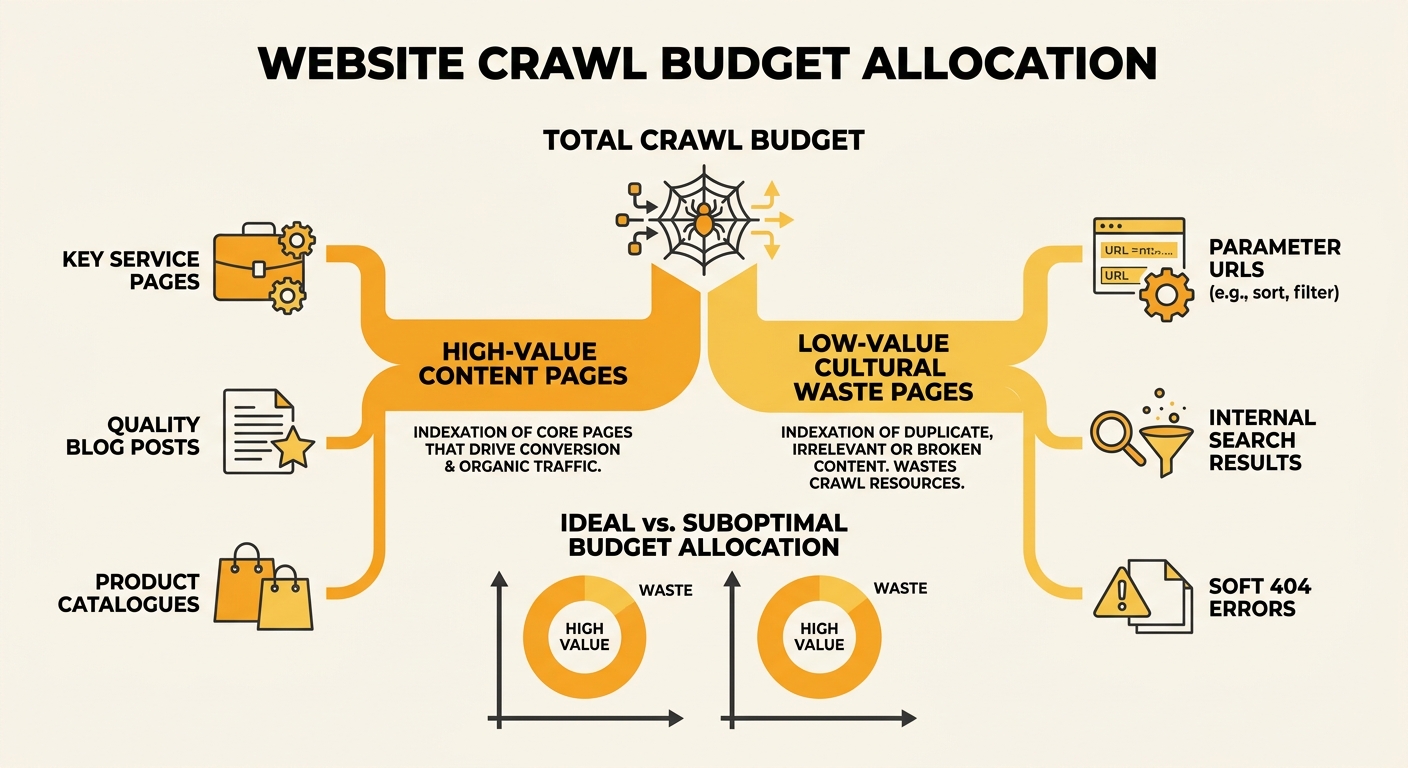

Roman Makarenko, a technical SEO specialist, has argued that most sites don’t have a crawling problem so much as a priority problem. Googlebot wastes its allocated crawl budget on low-value URLs (parameter pages, internal search results, faceted navigation) instead of the landing pages and content you actually want indexed. Layer irregular publishing on top of that, and you’ve got a crawl budget that’s both small and poorly directed.

Fixing the publishing schedule without fixing the technical foundation underneath it won’t work. Your content calendar creates crawl demand. Your technical setup determines whether that demand results in efficient crawling or wasted visits.

The December 2025 Google Rendering Update made this connection even tighter by excluding pages with non-200 HTTP status codes from the rendering pipeline entirely. If your site has soft 404s, misconfigured redirects, or error pages that return the wrong status code, those pages consume crawl budget without ever being properly processed. Combined with inconsistent publishing, you end up with a site where Google both visits infrequently and wastes a chunk of each visit on dead ends.
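To see how much of each visit goes to dead ends, you can tally the status codes Googlebot actually received. This is a rough sketch, assuming you have already extracted `(path, status_code)` pairs for Googlebot requests from your logs; `status_breakdown` is an illustrative name. Soft 404s return a 200 status by definition, so they will not show up in this count and need a separate content check.

```python
from collections import Counter

def status_breakdown(hits, top_n=5):
    """Summarise Googlebot hits given as (path, status_code) pairs.

    Returns (count per status code, share of hits that were not 200,
    the most frequently hit non-200 paths).
    """
    hits = list(hits)
    by_status = Counter(status for _, status in hits)
    non_200_paths = Counter(path for path, status in hits if status != 200)
    total = len(hits)
    non_200_share = (total - by_status[200]) / total if total else 0.0
    return by_status, non_200_share, non_200_paths.most_common(top_n)
```

A high non-200 share concentrated on a handful of paths usually points to a fixable redirect or error-page configuration rather than a content problem.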

If you suspect crawling rule conflicts are sabotaging your setup, sorting those out before worrying about your editorial calendar is the right order of operations.

What a Consistent Publishing Signal Actually Looks Like

Consistency doesn’t mean volume. You don’t need to publish daily. You need to publish on a pattern that Googlebot can learn to predict.

For a small Australian business blog, that might mean two articles per fortnight, published on the same days. For an e-commerce site, it might mean weekly product updates and monthly category page refreshes. The specific cadence matters less than the regularity. Mailchimp’s crawl budget guidance confirms that improving server performance, site speed, and internal linking helps crawlers navigate your site effectively — but those structural improvements work best when paired with predictable content signals.
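One way to put a number on "regularity" is to look at the gaps between publish dates. The sketch below is an assumption-laden proxy, not a metric Google publishes: it reports the mean gap, the longest gap, and the coefficient of variation (standard deviation divided by mean), where a value near zero means an even rhythm.

```python
from datetime import date
from statistics import mean, pstdev

def cadence_report(publish_dates):
    """Summarise how evenly spaced a list of publish dates is."""
    dates = sorted(publish_dates)
    if len(dates) < 2:
        raise ValueError("need at least two publish dates")
    # Gap in days between each consecutive pair of posts.
    gaps = [(later - earlier).days for earlier, later in zip(dates, dates[1:])]
    avg = mean(gaps)
    return {
        "mean_gap_days": avg,
        "longest_gap_days": max(gaps),
        # Hypothetical regularity proxy: 0.0 means perfectly even spacing.
        "variation": pstdev(gaps) / avg if avg else 0.0,
    }
```

For example, `cadence_report([date(2025, 1, 6), date(2025, 1, 13), date(2025, 1, 20)])` describes a strict weekly rhythm, while a six-week silence in the same list would show up immediately in `longest_gap_days`.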

Three things to get right technically:

- Last-Modified headers and sitemaps: Sites that properly configure these headers signal their freshness intentions clearly, often triggering faster re-crawling and re-indexing. If your CMS doesn’t update the lastmod field in your XML sitemap when content changes, Google has less reason to re-crawl those URLs.

- Internal linking aligned with publishing rhythm: Frequently updated pages should be well-linked from navigation or hub pages so crawlers discover them on every visit. Orphaned content — pages with no internal links pointing to them — suffers from delayed or missed indexation regardless of how good it is. We’ve written about finding orphaned pages and broken link chains in detail, and the principles apply directly here.

- Mobile-first consistency: DebugBear’s 2026 technical SEO checklist stresses that you need to always prioritise the mobile version of your pages. If your mobile and desktop versions serve different content, Google’s mobile-first crawler may not see the material you think it’s indexing.
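For the sitemap point in the list above, here is a minimal sketch of generating an XML sitemap whose `lastmod` values come from real modification times. It assumes your CMS can supply `(url, last_modified)` pairs with timezone-aware datetimes; `build_sitemap` is an illustrative helper, not part of any CMS.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Build sitemap XML (as bytes) from (url, timezone-aware datetime) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # W3C datetime format, normalised to UTC, e.g. 2025-03-01T09:30:00+00:00
        stamp = modified.astimezone(timezone.utc).isoformat(timespec="seconds")
        ET.SubElement(entry, "lastmod").text = stamp
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
```

The important design choice is that `modified` reflects when the content genuinely changed. Stamping every URL with the current time on each build makes the field uninformative.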

Content Clustering Amplifies the Consistency Signal

Publishing isolated articles with no thematic connection weakens the overall freshness signal for your domain. When you build content clusters around focused topic areas, each new piece in a cluster reinforces the topical authority of existing pages. Google’s crawler picks up on the internal link structure connecting clustered content and crawls the entire cluster more efficiently.

This is why editorial planning and technical SEO can’t operate in silos. Your publishing schedule determines when crawlers visit. Your content architecture determines what they find when they arrive. And your SEO benchmarking rhythm determines whether you catch problems before they compound.

For businesses that need help diagnosing whether irregular publishing is actively hurting their crawl budget, an SEO strategy review can identify the gap between your current publishing pattern and what the data shows Google expects from your domain.

Pruning Matters as Much as Publishing

Counter-intuitively, consistent publishing can hurt you if you’re also accumulating thin, duplicate, or auto-generated pages. Multiple SEO practitioners have pointed out that many e-commerce sites face index budget problems rather than crawl budget limits. Google chooses not to index low-value pages, and when your domain has thousands of them, the quality signal for the whole site degrades.

A practical approach for Australian SEO engagements: audit for pages that exist but add no value. Currency converter pages, empty tag archives, internal search result URLs, and paginated pages with duplicate content all consume crawl resources. Removing or noindexing these pages concentrates Google’s attention on the content that actually drives traffic.
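A first pass at that audit can be automated by flagging URLs that match known low-value patterns. The sketch below is illustrative only: the path prefixes and parameter names are hypothetical placeholders that you would replace with the patterns your own site generates, and a flagged URL is a candidate for review rather than an automatic removal.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical patterns; adjust to match your own site's URL structure.
LOW_VALUE_PATH_PREFIXES = ("/search/", "/tag/")
LOW_VALUE_PARAMS = {"sort", "filter", "currency", "sessionid"}

def low_value_reason(url):
    """Return a short reason if the URL looks low-value, otherwise None."""
    parts = urlsplit(url)
    # Normalise so "/search" and "/search/" are treated the same.
    path = parts.path.rstrip("/") + "/"
    if path.startswith(LOW_VALUE_PATH_PREFIXES):
        return "low-value path"
    params = set(parse_qs(parts.query, keep_blank_values=True))
    flagged = params & LOW_VALUE_PARAMS
    if flagged:
        return "parameter: " + ", ".join(sorted(flagged))
    return None
```

Running this over the URLs Googlebot requested, rather than over your sitemap, shows where crawl activity is actually going.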

Search Engine Land’s April 2026 guidance put it bluntly: prioritise depth, clarity of expertise, and consistency within a focused topic area rather than chasing volume. Publishing ten thin pages a week does more damage than publishing two substantial ones on schedule.

Warning: If key pages on your site aren’t being indexed or discovered, prioritise technical SEO fixes before investing in more content production. Adding content to a broken crawl pipeline just adds more URLs for Google to ignore.

The Open Threads

Several questions remain genuinely unsettled in how Google weights publishing consistency.

Google has never published specific thresholds for how quickly crawl demand drops after a publishing gap. The effect is well-documented anecdotally and through tools like Botify and Screaming Frog log analysis, but exact timelines vary by domain authority, niche, and site size. An Australian news site with a Domain Rating (DR) of 70 can probably survive a two-week publishing pause without noticeable crawler pullback. A small business blog at DR 25 might see effects within a week.

The interaction between AI crawlers and traditional Googlebot crawling patterns is also unresolved. As AI-driven search features reshape how content gets surfaced, the signals that determine crawler priority may shift. Sites optimised for traditional crawl budget management may find that AI crawlers have entirely different visit patterns and freshness expectations.

What is settled: irregular publishing trains Google to deprioritise your site. Getting back into a rhythm takes longer than maintaining one. And the technical foundations — clean status codes, accurate sitemaps, strong internal linking, pruned low-value pages — are what make your publishing schedule translate into actual indexation speed. Without those foundations, even the most disciplined editorial calendar is pushing content into a pipeline that leaks at every joint.