Standard technical SEO audits check crawlability, indexability, speed, mobile-friendliness and structured data—but those checklists were designed for Googlebot alone, according to a column published yesterday by Search Engine Journal. The article argues websites now serve at least a dozen additional non-human consumers, including AI crawlers and user-triggered agents that behave fundamentally differently from traditional search bots.

Cloudflare analysis from the first quarter of 2026 found that 30.6% of all web traffic now comes from bots, with AI crawlers and agents making up a growing share. The column proposes five new audit layers to account for AI-specific crawlers like GPTBot, ClaudeBot, PerplexityBot and Google-Agent, which access and process site content in ways that standard technical audits do not measure.

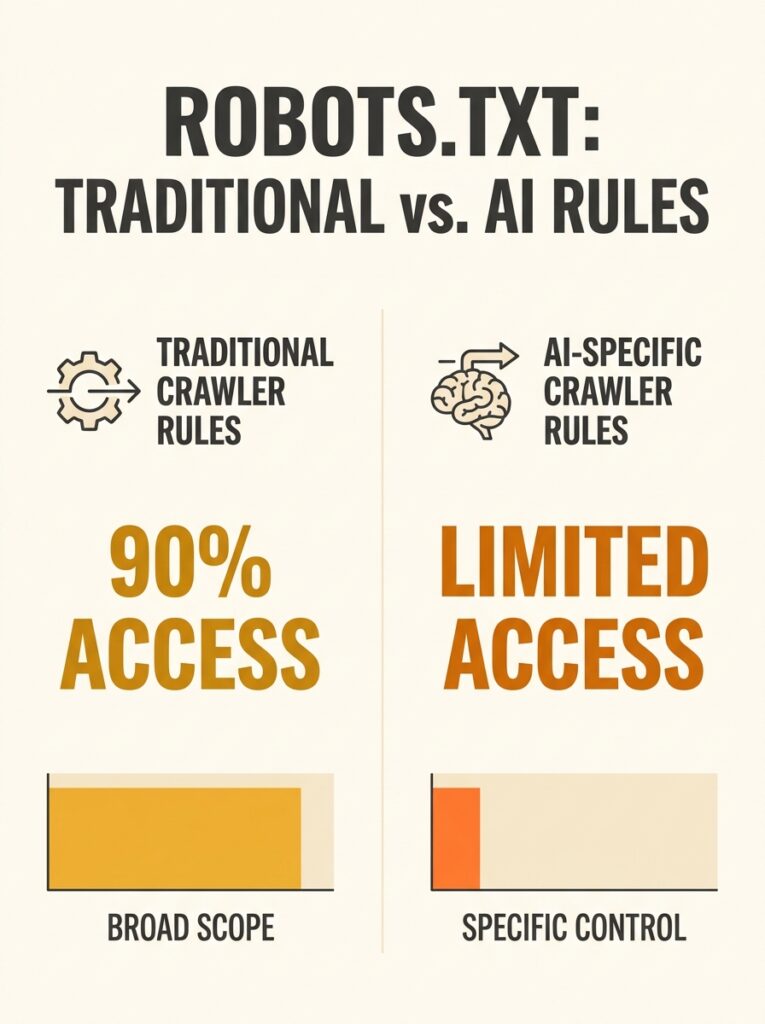

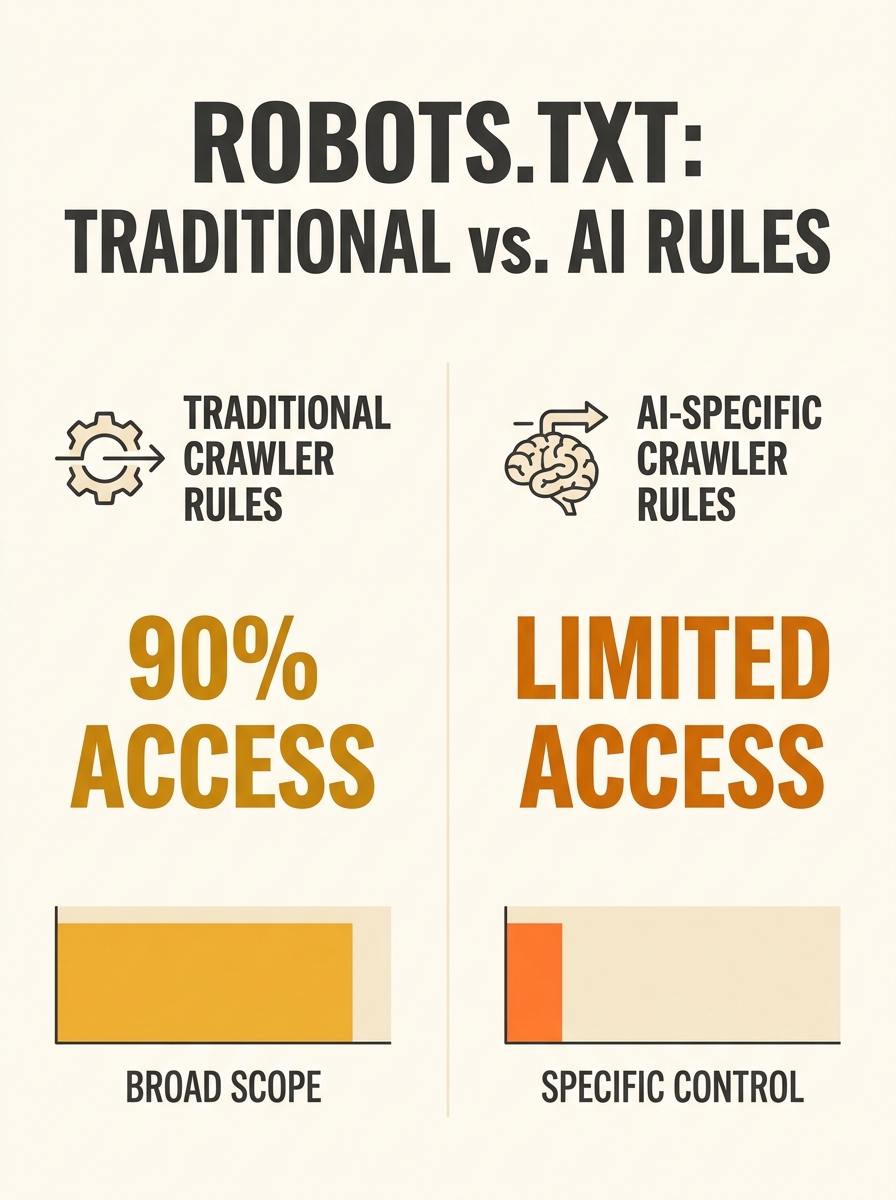

Robots.txt Rules Need AI Crawler Specificity

The first proposed layer addresses robots.txt configuration. Most robots.txt files were written for Googlebot, Bingbot and scrapers, the column states, but AI crawlers need rules of their own, separate from those written for traditional search bots.
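
The following is a minimal sketch of what AI-specific rules can look like. The robots.txt below gives search crawlers and training crawlers separate groups, and the rules are checked with Python’s standard-library urllib.robotparser. The file contents, the allow/block choices and the example.com URL are hypothetical illustrations, not recommendations from the column. Python’s parser matches on the crawler’s product token (such as GPTBot) and applies the first matching rule in a group. That differs from Google’s longest-match precedence, so treat this as a rough check rather than a faithful emulation of any crawler.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt with separate groups for AI crawlers. The allow/block
# choices are illustrative only. Python's parser ends a group at a blank line,
# so stacked User-agent lines must sit directly above their rules.
ROBOTS_TXT = """\
User-agent: Googlebot
Allow: /

User-agent: OAI-SearchBot
User-agent: PerplexityBot
Allow: /

User-agent: GPTBot
User-agent: CCBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def audit_robots(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Map each crawler token to whether the rules allow it to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    tokens = ["Googlebot", "OAI-SearchBot", "PerplexityBot", "GPTBot", "CCBot", "ClaudeBot"]
    for agent, allowed in audit_robots(ROBOTS_TXT, tokens, "https://example.com/products").items():
        # ClaudeBot has no group of its own, so it falls through to "User-agent: *".
        print(f"{agent:<14} {'allowed' if allowed else 'blocked'}")
```

With these rules, GPTBot and CCBot are blocked and the search crawlers are allowed. ClaudeBot has no group of its own, so it silently falls through to the wildcard rules. Surfacing that kind of fallback is the point of auditing robots.txt crawler by crawler.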

The article breaks AI crawler traffic into three categories using Cloudflare data: training crawlers that collect data for model training account for 89.4% of AI crawler traffic, search crawlers that power AI search results represent 8%, and user-triggered agents like Google-Agent and ChatGPT-User that browse on behalf of specific humans in real time make up 2.2%.
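
To see how a site’s own traffic splits across these three categories, server-log entries can be bucketed by User-Agent token. The sketch below assumes a token-to-category mapping based on the crawlers the column names, with GPTBot, ClaudeBot and Meta-ExternalAgent assumed to be training crawlers. Vendors add and rename tokens, so treat the list as an assumption to maintain. User-Agent headers can also be spoofed.

```python
from collections import Counter
from typing import Iterable, Optional

# Assumed token-to-category mapping, based on the column's three categories.
# Vendors add and rename tokens, and User-Agent headers can be spoofed.
CATEGORY_BY_TOKEN = {
    "gptbot": "training",
    "claudebot": "training",
    "ccbot": "training",
    "meta-externalagent": "training",
    "oai-searchbot": "search",
    "perplexitybot": "search",
    "chatgpt-user": "user-triggered",
    "google-agent": "user-triggered",
}


def classify(user_agent: str) -> Optional[str]:
    """Return the assumed category for a User-Agent header, or None if unmatched."""
    ua = user_agent.lower()
    for token, category in CATEGORY_BY_TOKEN.items():
        if token in ua:
            return category
    return None


def category_shares(user_agents: Iterable[str]) -> dict[str, float]:
    """Fraction of matched AI requests falling into each category."""
    counts = Counter(c for c in map(classify, user_agents) if c is not None)
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()} if total else {}


if __name__ == "__main__":
    # Made-up User-Agent values standing in for lines from an access log.
    sample = [
        "Mozilla/5.0 (compatible; GPTBot/1.0)",
        "CCBot/2.0",
        "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
        "Mozilla/5.0 (compatible; ChatGPT-User/1.0)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Firefox/125.0",
    ]
    print(category_shares(sample))
```

Ordinary browser traffic is ignored, so the shares describe only the AI requests the mapping recognises. These are the figures to compare against the column’s Cloudflare split.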

Crawl-to-referral ratios inform blocking decisions, according to the column. Anthropic’s ClaudeBot crawls 20,600 pages for every referral it sends back, OpenAI’s ratio stands at 1,300:1, and Meta sends no referrals at all. Blocking OpenAI’s OAI-SearchBot or PerplexityBot reduces visibility in ChatGPT Search and Perplexity’s AI answers. Blocking training-focused crawlers like CCBot or Meta’s crawler, by contrast, stops data extraction by providers that send no traffic back.
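
The ratio itself is simple arithmetic: pages crawled divided by referral visits received. The sketch below computes it from a site’s own data. It assumes crawl counts come from server logs and referral counts from analytics. The vendor names and counts in the demo are hypothetical.

```python
import math


def crawl_to_referral_ratio(crawl_requests: int, referral_visits: int) -> float:
    """Pages fetched by a crawler per visit its platform referred back."""
    if referral_visits == 0:
        return math.inf  # crawls but sends nothing back
    return crawl_requests / referral_visits


def rank_by_ratio(stats: dict[str, tuple[int, int]]) -> list[tuple[str, float]]:
    """Sort vendors by ratio, highest first; stats maps name -> (crawls, referrals)."""
    ratios = [(name, crawl_to_referral_ratio(c, r)) for name, (c, r) in stats.items()]
    return sorted(ratios, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical monthly counts: crawls from server logs, referrals from analytics.
    site_stats = {"VendorA": (12_000, 4), "VendorB": (3_000, 0), "VendorC": (900, 30)}
    for name, ratio in rank_by_ratio(site_stats):
        label = "no referrals" if math.isinf(ratio) else f"{ratio:,.0f}:1"
        print(f"{name}: {label}")
```

For instance, the column’s ClaudeBot figure corresponds to crawl_to_referral_ratio(20_600, 1), which is 20600.0. A crawler that sends no referrals, as the column reports for Meta, comes out as infinity and sorts to the top of the list.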

Google-Agent requires special attention. Google added Google-Agent to its official list of user-triggered fetchers on March 20, 2026. It identifies requests from AI systems running on Google infrastructure that browse websites on behalf of users. Unlike traditional crawlers, however, Google-Agent ignores robots.txt. Google’s position is that because a human initiated the request, the agent acts as a user proxy rather than an autonomous crawler, so robots.txt rules do not apply to it.

JavaScript Rendering Creates Visibility Gap

The second layer addresses JavaScript rendering gaps. Googlebot renders JavaScript using headless Chromium, but virtually no major AI crawler renders JavaScript, the column states.

GPTBot, ClaudeBot, PerplexityBot and CCBot fetch static HTML only. Applebot and Googlebot are the only major crawlers that render JavaScript. Four of the six major web crawlers therefore cannot see content that exists only in client-side JavaScript. That makes server-side rendering a requirement for AI search visibility rather than an optimisation.

The column recommends fetching critical pages with curl, which does not execute JavaScript, and searching the output for key content such as product names, prices or service descriptions. If that content is not in the curl response, GPTBot, ClaudeBot and PerplexityBot cannot see it. Single-page applications built with React, Vue or Angular are particularly at risk unless they use server-side rendering or static site generation.
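
The curl check can be scripted to run across many pages. The sketch below uses only Python’s standard library. It fetches the server response without executing JavaScript, as curl does, and lists which key phrases are absent. The User-Agent value, the phrases and the sample HTML are hypothetical. A plain substring search can also miss text that the markup splits across tags or encodes as HTML entities, so a miss is a prompt to look closer, not proof.

```python
import urllib.request


def fetch_raw_html(url: str, user_agent: str = "raw-html-audit/0.1") -> str:
    """Fetch a page's server response without running JavaScript, as curl does."""
    # The default User-Agent is a hypothetical placeholder.
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def missing_phrases(html: str, phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in the raw HTML (case-insensitive)."""
    lowered = html.lower()
    return [phrase for phrase in phrases if phrase.lower() not in lowered]


if __name__ == "__main__":
    # A typical client-rendered shell: product details only exist after app.js runs.
    spa_shell = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'
    print(missing_phrases(spa_shell, ["Blue Widget", "$49.95"]))
```

In practice you would call missing_phrases(fetch_raw_html(url), phrases) for each critical URL. An empty result means every phrase appears in the static HTML that non-rendering crawlers receive.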

The problem mirrors the crawl-rule conflicts that sabotage technical SEO setups when directives contradict each other. Next.js supports server-side rendering and static site generation natively for React, Nuxt provides the same for Vue, and Angular Universal handles server rendering for Angular applications, the column notes.

Structured Data Evaluation Criteria Shift

The third proposed audit layer updates structured data evaluation criteria. The question is no longer just whether structured data validates in Google’s testing tool, but whether it surfaces in AI-generated answers and citations.

The column argues that technical SEO tools often create a “false completeness” blind spot. They mark structured data implementation as complete once validation passes, without measuring whether AI systems actually extract and surface that data. Standard audits check schema implementation but do not verify whether AI systems parse the markup and use it in their answers.
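
Part of that gap can be checked mechanically: whether JSON-LD exists in the static HTML that non-rendering crawlers receive, or is only injected by JavaScript after the page loads. The sketch below uses Python’s standard-library HTML parser to answer that narrow question. It cannot show whether an AI system actually extracts or cites the markup, which still requires checking AI answers directly. The handling of @graph and list-valued @type is a simplifying assumption, not a full JSON-LD processor.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> element."""

    def __init__(self) -> None:
        super().__init__()
        self._inside = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").strip().lower()
        if tag == "script" and script_type == "application/ld+json":
            self._inside = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.blocks[-1] += data  # data may arrive in several chunks


def schema_types_in_static_html(html: str) -> list[str]:
    """List the schema.org @type values present in the page's static JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    types: list[str] = []
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD; worth reporting in a real audit
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                continue
            nodes = item.get("@graph", [item])  # simplified @graph handling
            for node in nodes if isinstance(nodes, list) else [nodes]:
                if isinstance(node, dict) and "@type" in node:
                    found = node["@type"]
                    types.extend(found if isinstance(found, list) else [found])
    return types


if __name__ == "__main__":
    page = (
        '<html><head><script type="application/ld+json">'
        '{"@context": "https://schema.org", "@type": "Product", "name": "Blue Widget"}'
        "</script></head><body></body></html>"
    )
    print(schema_types_in_static_html(page))
```

Run it on a saved curl response. Suppose the result is empty for a page whose validator report shows schema. That suggests the markup is injected client-side, and non-rendering AI crawlers never see it.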

Why This Matters Now

Australian businesses modernising their sites with JavaScript frameworks face a critical technical gap. A React-based e-commerce site that passes every standard technical SEO audit may be invisible to the AI crawlers that train models and power Perplexity, ChatGPT Search and Claude’s web browsing features. Cloudflare’s 30.6% figure means that nearly a third of web traffic now comes from non-human consumers that most technical audits ignore.

The robots.txt decisions carry direct trade-offs. Blocking training crawlers like CCBot prevents data extraction by systems that send no referrals. Allowing search crawlers like PerplexityBot, on the other hand, maintains visibility in AI-powered search results that drive actual traffic. The crawl-to-referral ratios give site owners concrete data for weighing those decisions instead of relying on a blanket allow-or-block approach.

The JavaScript rendering gap affects site architecture choices for Australian SMEs building or rebuilding sites. Server-side rendering adds infrastructure complexity and hosting cost, but the alternative is serving blank pages to four of the six major crawlers. For businesses evaluating framework adoption, the AI crawler layer shifts server-side rendering from a performance optimisation to a visibility requirement.