Screaming Frog will finish crawling your affiliate site in under three minutes, spit out a dashboard full of green indicators, and leave you convinced the technical foundations are solid. Ahrefs, SEMrush, Sitebulb — same story. The green ticks confirm your pages return 200 status codes, your title tags exist, your images have alt text. They confirm none of the things that actually determine whether your product review pages earn commissions, whether Google treats your comparison content as authoritative, or whether the internal linking architecture funnels authority toward the pages that pay your bills. For Australian affiliate marketers, SEO tool limitations create a particular blind spot: the tools report on compliance while your revenue depends on context, trust signals, and conversion architecture that no automated scan can evaluate.

These seven rules are what we return to when a client’s audit looks perfect on screen but their affiliate earnings are flat or declining. They apply whether you’re running a single niche review site or managing a portfolio of comparison properties across Australian verticals.

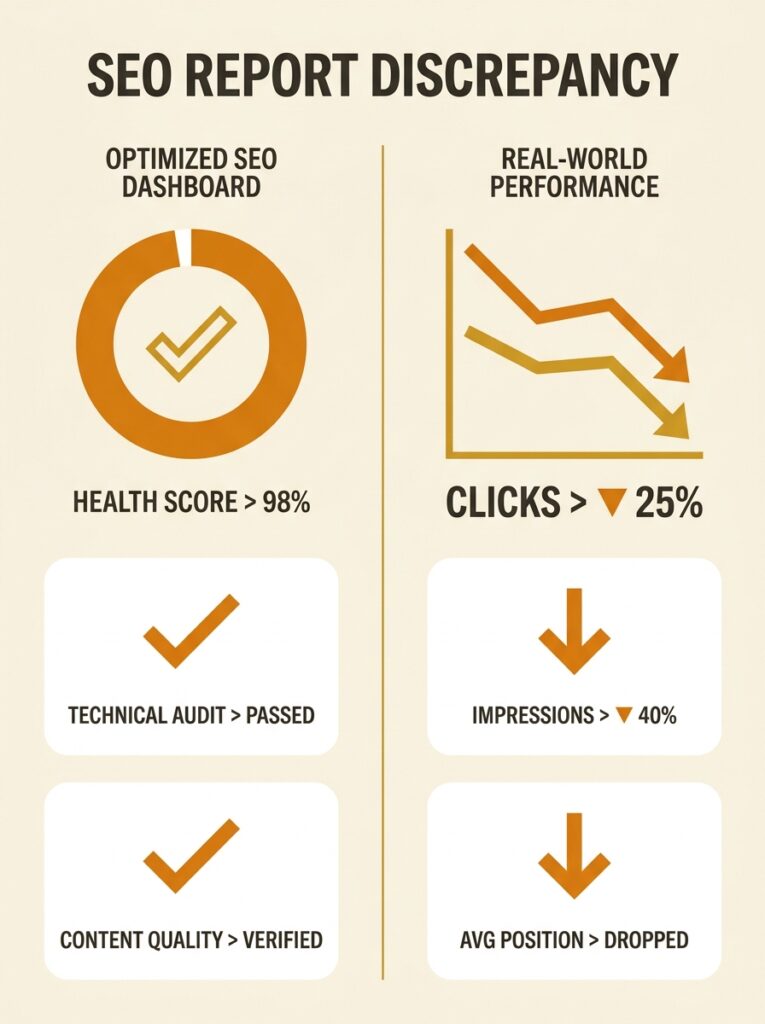

Treat every green tick as a hypothesis, not a verdict

A green tick in any SEO tool means a single, narrow check passed. Your page has a meta description under 160 characters. Your H1 exists. Your canonical tag points to itself. These are necessary conditions for ranking, but they tell you nothing about whether the meta description compels clicks for commercial-intent queries, whether the H1 matches what a buyer in Brisbane or Perth actually types, or whether the canonical structure across your affiliate product pages creates the topical clustering Google needs to see.

According to Ahrefs’ own analysis of audit data, 95.2% of sites have 3xx redirect issues and 88% have HTTP to HTTPS redirect problems. Tools catch those. But if you’re running an affiliate site reviewing, say, solar panel installers across Australian states, the tool won’t flag that your Queensland and New South Wales pages have near-identical content with only the state name swapped, a pattern Google’s algorithms increasingly penalise.
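One rough way to surface state-swapped pages yourself is to mask place names and compare what is left. The sketch below is illustrative only: the `PLACES` list, the function names, and any threshold you apply are assumptions, not something a crawler or Google exposes.

```python
import re
from difflib import SequenceMatcher

# Assumption: an illustrative list of place names to mask; extend it for your own site.
PLACES = [
    "new south wales", "queensland", "victoria", "western australia",
    "south australia", "tasmania", "sydney", "melbourne", "brisbane", "perth",
]


def normalise(text, places=PLACES):
    """Lowercase the text and replace known place names with a placeholder."""
    text = text.lower()
    # Handle longer names first so a multi-word name is masked before
    # any shorter name it might contain.
    for place in sorted(places, key=len, reverse=True):
        text = re.sub(r"\b" + re.escape(place) + r"\b", "<place>", text)
    return re.sub(r"\s+", " ", text).strip()


def template_similarity(page_a, page_b):
    """Word-level similarity (0.0 to 1.0) after place names are masked."""
    words_a = normalise(page_a).split()
    words_b = normalise(page_b).split()
    # autojunk=False keeps common words in play on long pages.
    return SequenceMatcher(None, words_a, words_b, autojunk=False).ratio()
```

Pairs of location pages that score close to 1.0 are the ones worth reading side by side. Where to draw the line (0.9 is a reasonable starting guess) depends on how much shared boilerplate your template legitimately carries.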

The rule: every time a tool gives you a green indicator, ask yourself what question it actually answered. Then ask what question it didn’t.

Run the automated crawl first, then ask what it missed

Automated tools are excellent at scale. They’ll process ten thousand URLs in the time it takes you to make a coffee. For affiliate sites with large product catalogues or comparison databases, that speed matters. You can’t manually check every page for broken links or missing schema.

But here’s where automated and manual SEO analysis diverge sharply. As Ignite Digital’s research on audit methods puts it, top SEO professionals use automated tools to gather data efficiently, then apply manual expertise to interpret the results. Screaming Frog’s issues tab, for example, flags potential problems but frequently misclassifies intentional design choices as errors.

For affiliate sites specifically, the manual layer needs to examine things tools can’t score: Does the “best X in Australia” page actually demonstrate first-hand testing? Are the affiliate disclosures positioned where Google’s quality raters expect them? Does the internal link profile prioritise your highest-converting product categories, or does it distribute link equity evenly across pages that include dead-end informational content earning zero commissions?
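The internal-link question can be roughed out from a crawl export. This is a minimal sketch, assuming you can produce a list of `(source, target)` internal link pairs and a per-URL commission figure. Both inputs and the function name are hypothetical, and raw inlink counts are only a crude proxy for link equity.

```python
from collections import Counter


def link_vs_revenue(edges, revenue):
    """Compare each page's share of internal links with its share of revenue.

    edges:   iterable of (source_url, target_url) internal links
    revenue: dict mapping url -> commission earned in the period
    Returns rows sorted so the most under-linked earners come first.
    """
    # Count inbound internal links, ignoring self-links.
    inlinks = Counter(target for source, target in edges if source != target)
    total_links = sum(inlinks.values())
    total_revenue = sum(revenue.values())

    rows = []
    for url, earned in revenue.items():
        link_share = inlinks[url] / total_links if total_links else 0.0
        revenue_share = earned / total_revenue if total_revenue else 0.0
        rows.append({
            "url": url,
            "inlinks": inlinks[url],
            "link_share": link_share,
            "revenue_share": revenue_share,
            # Positive gap: the page earns more than its link share suggests.
            "gap": revenue_share - link_share,
        })
    return sorted(rows, key=lambda row: row["gap"], reverse=True)
```

A page near the top of the output earns well but receives few internal links, which makes it a candidate for a manual look at where links could reasonably be added.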

If you’re building a monitoring dashboard for your business, make sure it includes at least a few metrics that require human interpretation alongside the automated ones.

Tip: After every automated crawl, spend 20 minutes manually reviewing five of the pages the tool marked as “no issues found.” You’ll almost always find content gaps, thin affiliate disclosures, or internal linking opportunities the crawler had no framework to evaluate.

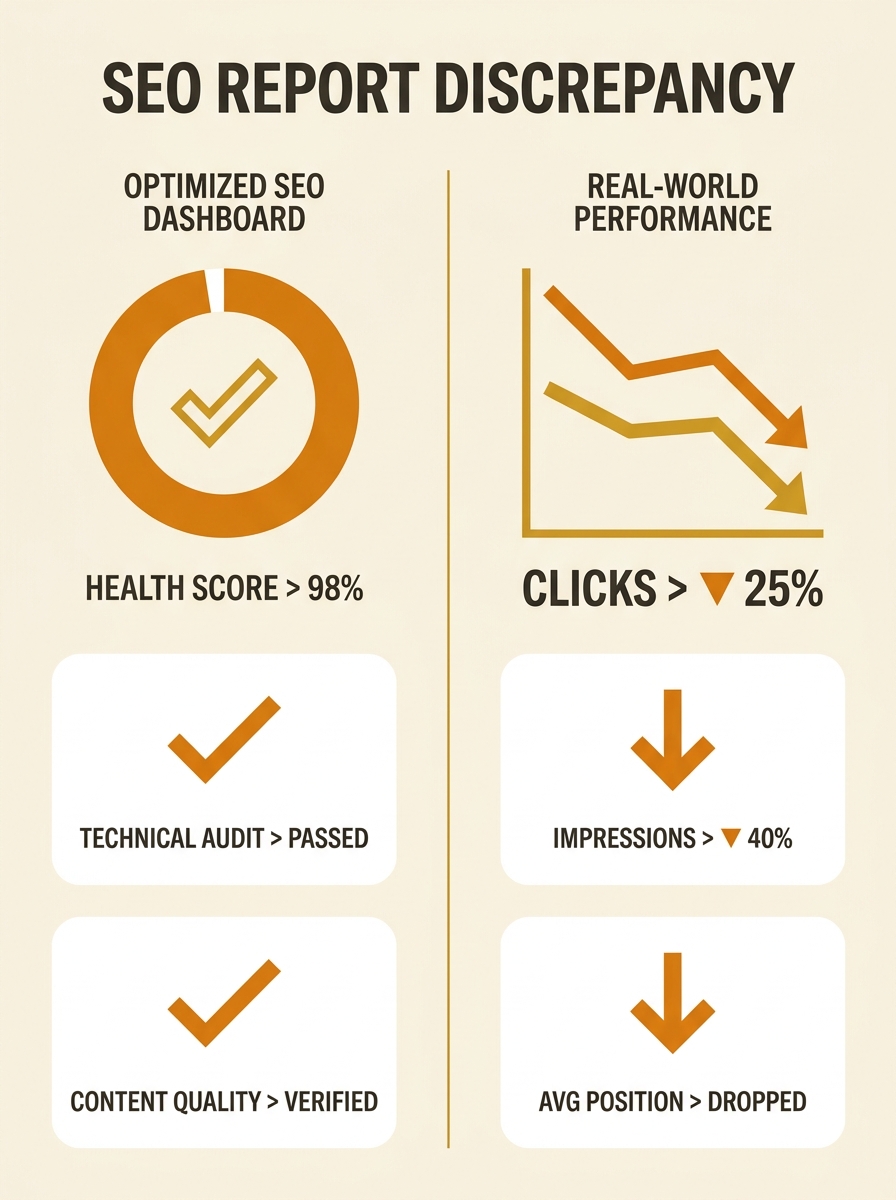

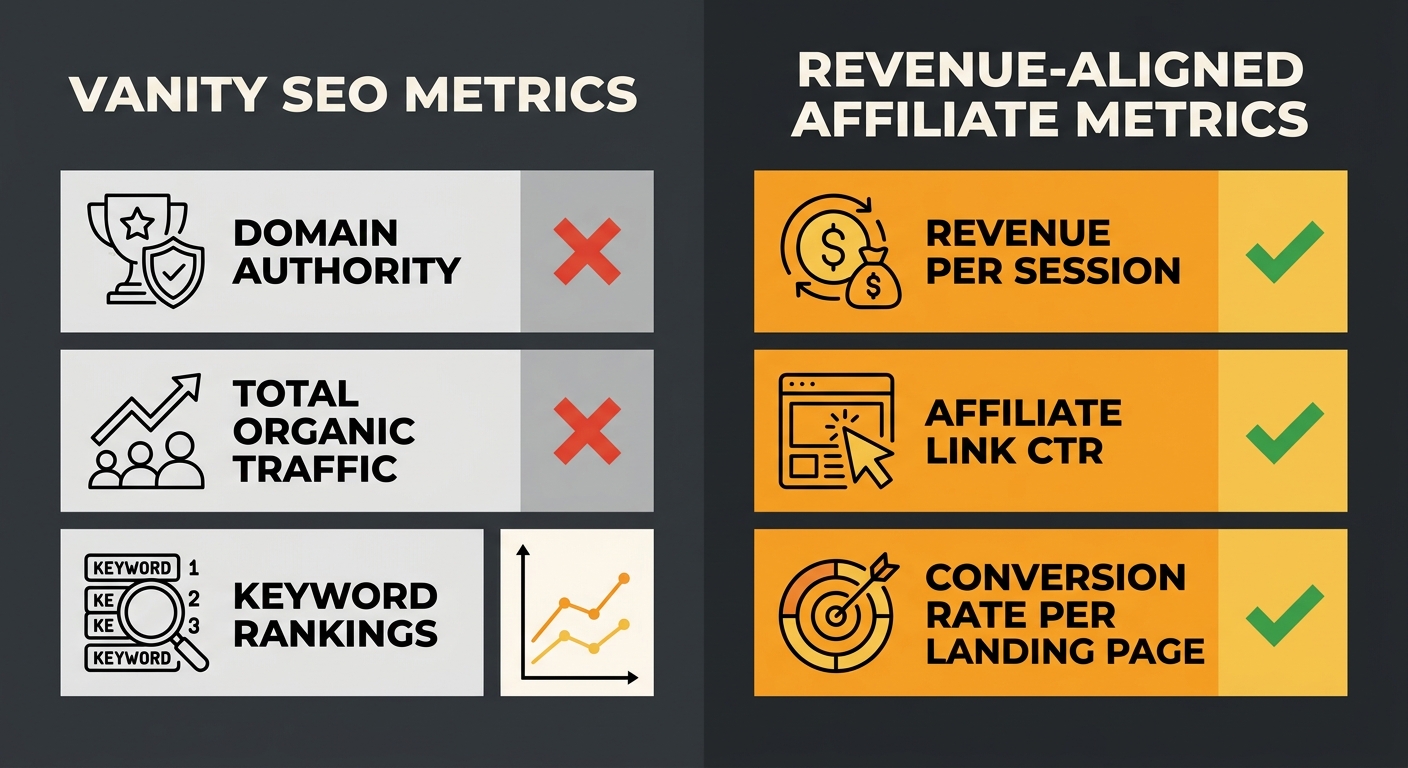

Match your metrics to commissions, not to dashboards

Domain Authority went up three points. Organic traffic grew 12% month-on-month. Average position improved for 40 keywords. If you’re an affiliate marketer, none of these numbers tells you whether you made more money.

This is the core challenge with SEO metrics interpretation for affiliate businesses. Search Engine Land’s analysis of outdated SEO metrics argues that organic traffic, average position, and Domain Authority won’t prove SEO ROI and should be retired in favour of revenue-focused tracking. Single keyword rankings fluctuate constantly, and pinning the performance of a page on one keyword — or even a handful — misrepresents what’s happening.

For affiliate sites, the metrics that matter are: click-through rate on affiliate links per landing page, revenue per session segmented by organic channel, and conversion rate on pages that rank for commercial-intent terms. If your “best home insurance Australia” page ranks position three but converts at 0.2%, you have a content problem, a trust problem, or an intent mismatch — and no SEO tool will surface that. Understanding how to measure content marketing ROI with specificity makes the difference between guessing and knowing.
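The three metrics above are simple ratios once the inputs sit side by side. The sketch below assumes you have already joined analytics and affiliate-network data per landing page. The `PageStats` fields are hypothetical names, and conversion rate is taken here as conversions per organic session.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    organic_sessions: int
    affiliate_clicks: int   # outbound clicks on affiliate links
    conversions: int        # sales or leads reported by the affiliate network
    commission_aud: float   # commission earned in the same period


def commercial_metrics(page):
    """Return revenue-focused ratios for one landing page."""
    sessions = page.organic_sessions
    if sessions == 0:
        return {"affiliate_ctr": 0.0, "conversion_rate": 0.0, "revenue_per_session": 0.0}
    return {
        "affiliate_ctr": page.affiliate_clicks / sessions,
        "conversion_rate": page.conversions / sessions,
        "revenue_per_session": page.commission_aud / sessions,
    }


# Illustrative numbers only.
page = PageStats("/best-home-insurance-australia", 5000, 400, 10, 750.0)
metrics = commercial_metrics(page)
print(f"{metrics['conversion_rate']:.1%} conversion, "
      f"${metrics['revenue_per_session']:.2f} per session")
```

Tracked per page over time, these ratios show whether a ranking gain turned into income, which no position or traffic chart can do on its own.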


Audit your local signals with something other than a score

Australian affiliate sites often target location-specific queries. “Best NBN plans Sydney,” “cheapest car insurance Queensland,” “top-rated mattress Melbourne.” These queries carry strong local intent, and Google weighs local relevance factors that tools can only partially measure.

Moz’s research on local ranking factors confirms that location-based keywords in title tags, meta descriptions, and headings matter — but that’s the mechanical part. The contextual part, the part tools miss, involves whether your content demonstrates genuine familiarity with the local market. Does your Sydney NBN comparison page reference actual coverage maps? Does it mention suburbs where particular providers have known issues? These are the signals that help build topical authority in Google’s eyes, and they require someone who understands the Australian market to evaluate.

Context-driven optimisation means treating every location page as a standalone editorial product rather than a template with the city name swapped in. Tools can tell you the page exists, has the right word count, and includes location keywords. They can’t tell you whether the page reads like it was written by someone who’s actually compared NBN providers in Parramatta versus someone who scraped a database and plugged in postcodes.

Question the tool’s priority list before acting on it

SEO tools rank their findings by severity: critical, high, medium, low. The ranking is based on the tool’s internal logic, which knows nothing about your business model. A “critical” alert about duplicate meta descriptions across your blog posts might matter far less than a “low” warning about thin content on a product comparison page that generates 40% of your affiliate revenue.

When conducting technical SEO audits for Australian affiliate sites, the priority sequence should flow from revenue impact, not from the tool’s colour-coding system. A redirect chain on your top-earning page is urgent. The same redirect chain on a blog post from three years ago that gets twelve visits a month can wait.

This is where understanding technical SEO debt becomes practically useful. Every site accumulates technical issues over time. The question isn’t whether they exist — they always do — but which ones actively suppress the pages that drive affiliate income and which ones are cosmetic blemishes the tool highlights because it was programmed to highlight them.
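Re-sorting a tool’s issue list by revenue takes only a few lines. The sketch below assumes you can export issues with a URL and severity label and that you hold a per-URL monthly commission figure. The `Issue` fields and `SEVERITY_RANK` mapping are illustrative, not any tool’s actual export format.

```python
from dataclasses import dataclass

# Assumed severity labels; used only to break ties between equal-revenue pages.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Issue:
    url: str
    description: str
    tool_severity: str


def prioritise(issues, monthly_revenue):
    """Order issues by the revenue of the affected page, then by tool severity."""
    def sort_key(issue):
        revenue = monthly_revenue.get(issue.url, 0.0)
        severity = SEVERITY_RANK.get(issue.tool_severity, len(SEVERITY_RANK))
        return (-revenue, severity)
    return sorted(issues, key=sort_key)


issues = [
    Issue("/blog/old-post", "Duplicate meta description", "critical"),
    Issue("/compare/home-insurance", "Thin content", "low"),
]
revenue = {"/compare/home-insurance": 4000.0, "/blog/old-post": 0.0}

for issue in prioritise(issues, revenue):
    print(issue.url, "-", issue.description)
```

With these sample figures the “low” warning on the comparison page comes out ahead of the “critical” alert on the old blog post, which is the reordering this rule asks for.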

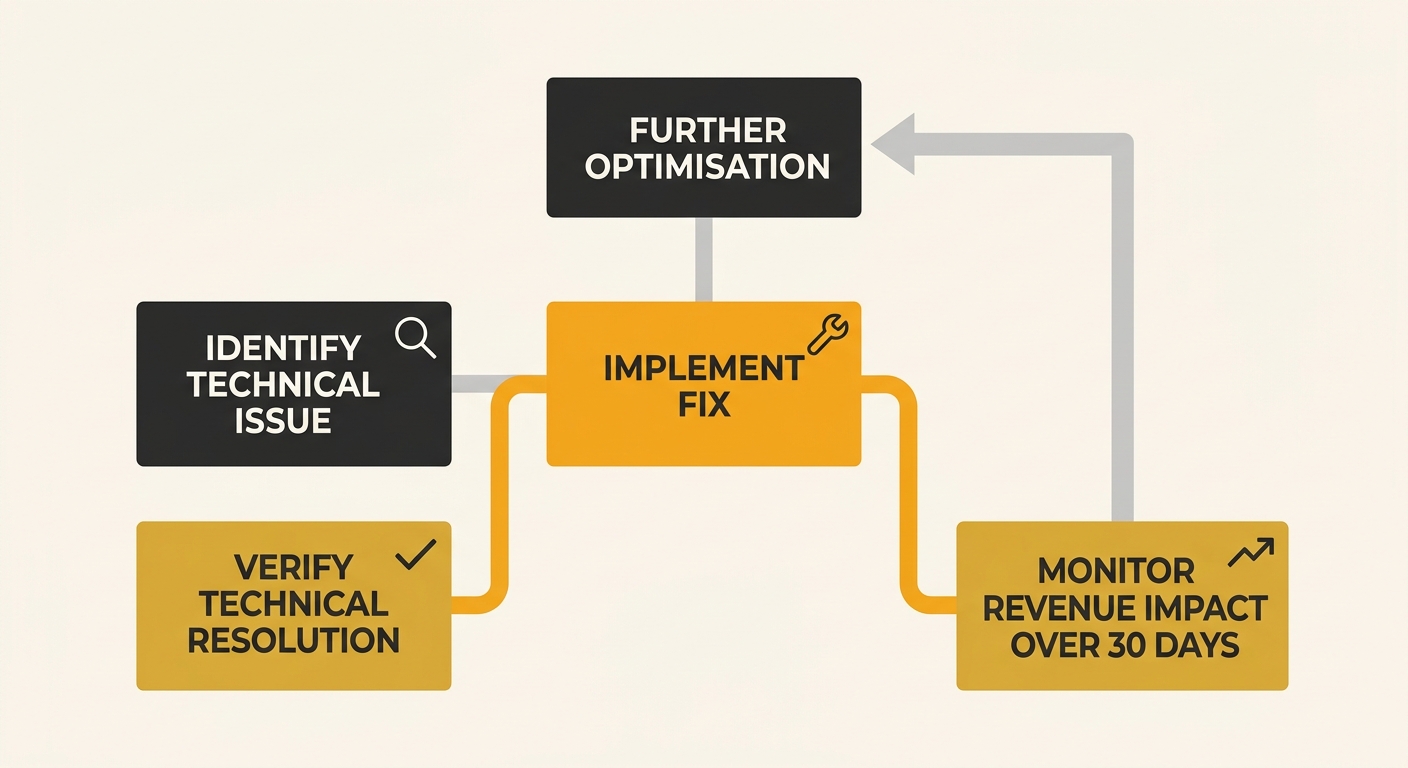

Pair every technical fix with a conversion check

You fixed the Core Web Vitals. Page speed improved from 3.2 seconds to 1.8 seconds. Mobile layout shift dropped to near zero. Excellent work. But did affiliate revenue on that page change?

This sounds obvious, yet many technical SEO audits in Australia, whether conducted by agencies or in-house teams, treat the technical fix as the endpoint. The page is faster, so the task is marked complete. For affiliate marketers, the task isn’t complete until you’ve confirmed the fix moved the metric that matters: conversions, click-throughs on affiliate links, or revenue per organic visit.

Sometimes a technical fix improves rankings but the new traffic cohort has lower purchase intent. You’ve attracted more visitors searching informational queries rather than commercial ones. Your site structure and content architecture might need adjustment to ensure the pages Google surfaces for commercial terms are the ones optimised for conversion, while informational content feeds authority to those pages through internal links rather than competing with them.
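A before-and-after comparison makes that cohort shift visible. This is a minimal sketch, assuming you can pull organic sessions and commission for equal-length periods either side of the fix. The function names are hypothetical, and it does not control for seasonality or anything else that changed in the same window.

```python
def pct_change(old, new):
    """Relative change from old to new, or None when old is zero."""
    return (new - old) / old if old else None


def compare_periods(before, after):
    """Compare two (organic_sessions, commission_aud) tuples for one page."""
    before_sessions, before_revenue = before
    after_sessions, after_revenue = after
    before_rps = before_revenue / before_sessions if before_sessions else 0.0
    after_rps = after_revenue / after_sessions if after_sessions else 0.0
    return {
        "sessions_change": pct_change(before_sessions, after_sessions),
        "revenue_change": pct_change(before_revenue, after_revenue),
        "revenue_per_session_change": pct_change(before_rps, after_rps),
    }


# Illustrative numbers: traffic rose, but each visit became less valuable.
result = compare_periods(before=(4000, 800.0), after=(6000, 900.0))
for name, value in result.items():
    print(name, "n/a" if value is None else f"{value:+.1%}")
```

Sessions rising much faster than revenue, with revenue per session falling, is the pattern described above: the fix brought in more visitors, but a larger share of them arrived with informational rather than commercial intent.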

Build your E-E-A-T signals outside the tool’s field of vision

Google’s quality rater guidelines place enormous weight on Experience, Expertise, Authoritativeness, and Trustworthiness. For affiliate sites, this scrutiny is heightened because Google knows these sites exist to generate commissions, and the incentive to recommend products based on payout rather than quality is real.

No SEO tool scores genuine E-E-A-T. Tools might check for author bios, about pages, and schema markup — the structural indicators. But the substance of E-E-A-T for an Australian affiliate site involves things like: Are your product reviews based on hands-on testing? Do your comparison tables include products with lower affiliate commissions when they’re genuinely better? Does your site disclose affiliate relationships clearly? Understanding what E-E-A-T means in practice and building those signals into your content workflow matters far more than any technical checkbox.

If your content could have been written by someone who’s never touched the products being reviewed, Google’s algorithms — and increasingly its AI-powered search features — will deprioritise it. The tool will still show green ticks. The traffic will still decline.

When these rules break down

There’s a scenario where you should ignore everything above and focus purely on what the tools tell you: when your site is new, has fewer than fifty pages, and has never been through a proper technical audit. In that case, the foundational technical issues the tools flag — broken redirects, missing HTTPS, orphaned pages, absent sitemaps — are genuinely the most important things to fix. The nuance of context-driven optimisation only becomes relevant once the basics are handled.

And there’s a second scenario: site migrations. When you’re moving an affiliate site to a new domain, restructuring URLs, or switching CMS platforms, the automated crawl data is indispensable and the priority list is more trustworthy. Fix what the tool flags, in roughly the order it suggests, and validate everything with Search Console data afterward.

Outside those two situations, the green ticks will always tell you a partial story. The profitable story — the one where your Australian affiliate site converts organic traffic into actual commissions — lives in the gap between what the tool measures and what your business requires. Filling that gap takes human judgment, market-specific knowledge, and the willingness to question a dashboard that’s telling you everything looks fine.