Technical SEO tools create a false sense of completeness, and that perception is the single biggest blind spot for practitioners who rely on automated audits rather than raw server data, according to an analysis published April 21 in Search Engine Journal’s Ask An SEO column.

The column, responding to a reader question about over-reliance on SEO tools, identified the perception that crawlers and audit platforms show “the full picture” as the root cause of mis-prioritisation, conflicting insights, and misguided fixes across technical SEO implementations.

The analysis centred on how popular technical SEO platforms present colour-coded alerts, health scores, and prioritised issue lists that create an illusion of comprehensive site analysis. In reality, the column noted, these tools deliver only representative models constrained by their own crawl limits, assumptions about site structure, and data sampling methods.

Tools Deliver Snapshots, Not Root Causes

Most technical SEO platforms provide a snapshot of a website at the point a crawler or report runs, the column explained. While useful for spot-checking issues before they impact rankings, these snapshots fail to show how problems developed over time or identify underlying causes.

The tools also apply proprietary prioritisation algorithms that can conflict with one another. When two platforms analyse the same site, differing sampling methods or severity rankings produce non-comparable metrics, leaving practitioners uncertain which recommendations to follow.

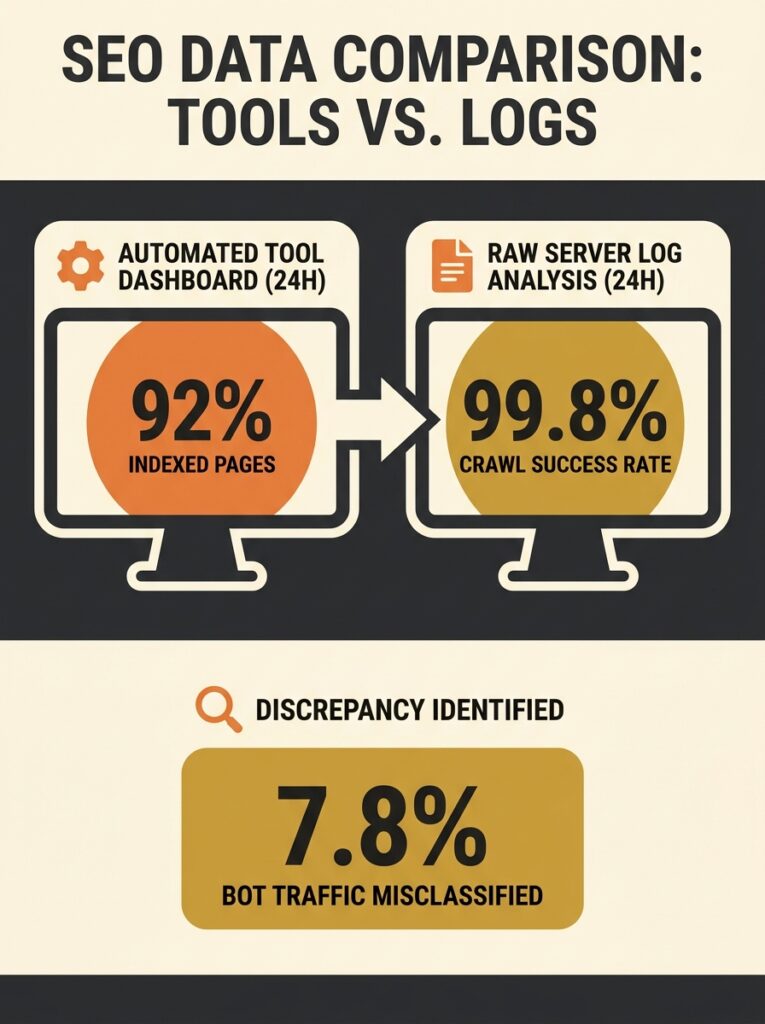

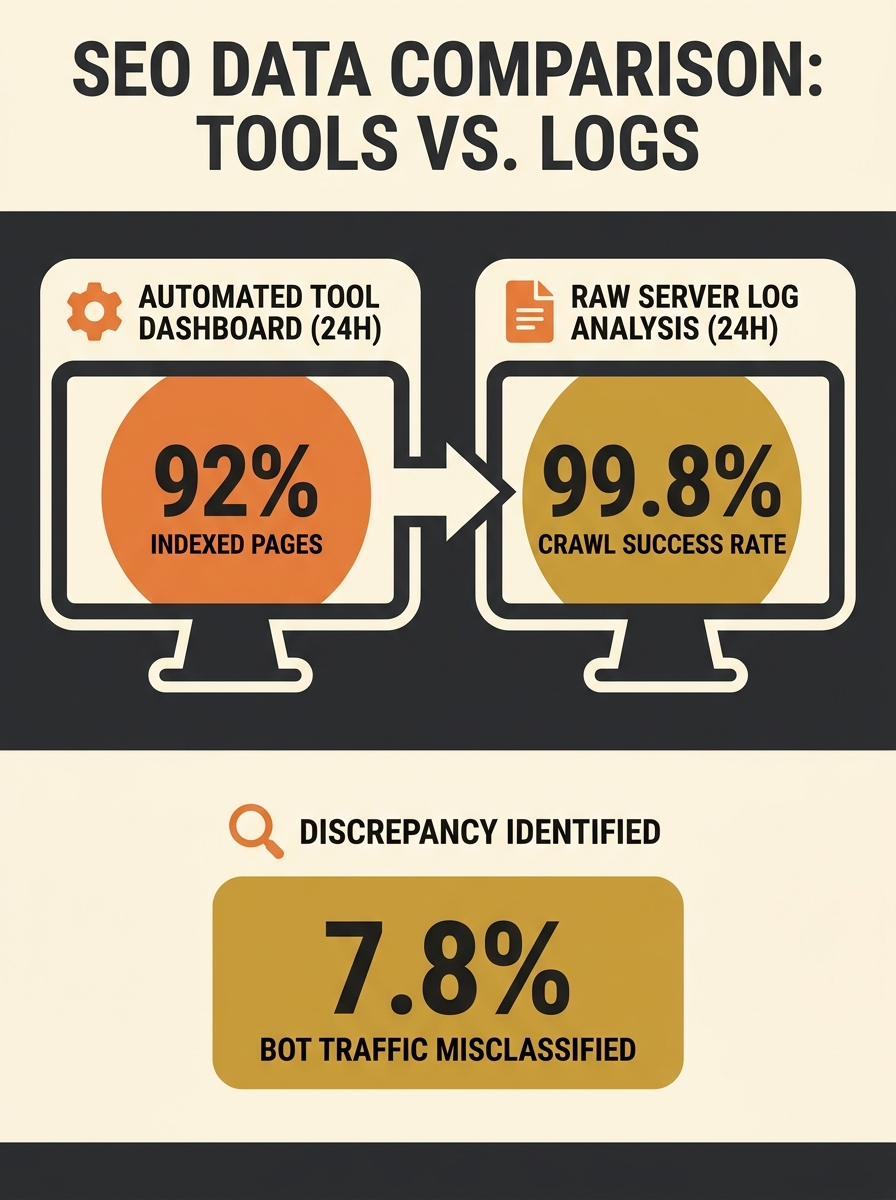

Search Engine Journal’s analysis highlighted specific gaps in automated tool coverage. Crawler-based platforms report only on their own findings rather than actual search engine behaviour. To understand how Google or Bing genuinely crawl a site, the column stated, practitioners must examine server log files that record real bot activity.
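As a rough illustration of what examining log files can involve, the Python sketch below counts requests from search bots per URL and status code. It assumes the server writes the common "combined" access log format and identifies bots by user-agent substring only. The sample lines, the `count_bot_hits` name, and the bot list are invented for this example. User agents can be spoofed, so a real audit would also verify the requesting IP addresses.

```python
import re
from collections import Counter

# Assumed format (combined log):
# ip ident user [time] "METHOD path PROTO" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)

# Hypothetical mapping of user-agent substrings to bot labels.
BOT_TOKENS = {"googlebot": "Googlebot", "bingbot": "Bingbot"}


def count_bot_hits(lines):
    """Return a Counter keyed by (bot, path, status) for recognised bot requests."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if not match:
            continue  # skip lines that do not fit the assumed format
        agent = match.group("agent").lower()
        for token, bot in BOT_TOKENS.items():
            if token in agent:
                key = (bot, match.group("path"), int(match.group("status")))
                hits[key] += 1
                break
    return hits


# Invented sample lines, for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Oct/2000:13:55:36 -0700] "GET /products HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Oct/2000:13:56:02 -0700] "GET /products HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '157.55.39.1 - - [10/Oct/2000:13:57:10 -0700] "GET /old-page HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '203.0.113.7 - - [10/Oct/2000:13:58:44 -0700] "GET /products HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

if __name__ == "__main__":
    for (bot, path, status), count in sorted(count_bot_hits(SAMPLE_LOG).items()):
        print(bot, path, status, count)
```

A tally like this shows which URLs bots actually requested and what the server returned, which a third-party crawl cannot report.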

Raw Data Sources Tools Miss

The column identified four critical raw data sources that technical SEO tools typically omit or simulate rather than measure directly.

Server log files show what search bots actually crawled, the analysis noted. Raw Google Search Console and Bing Webmaster Tools exports, despite privacy filtering and row limits, come directly from the search engines themselves. The rendered DOM reveals what content exists after JavaScript execution, and HTTP headers expose canonicalisation, caching, and indexing directives at the request level.

Without these raw sources, the column argued, practitioners diagnose a simulation of their site rather than the real thing.
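To make the HTTP header point concrete, the following Python sketch pulls canonical, robots, and caching signals out of a set of response headers. It is a minimal example, assuming the headers have already been captured (for instance with `curl -I` or any HTTP client) and passed in as a plain mapping. The `seo_header_summary` function and the example values are hypothetical, and the `Link` parsing handles only the simple single-link case.

```python
import re

# Matches a Link header entry such as: <https://example.com/a>; rel="canonical"
CANONICAL_LINK = re.compile(r'<([^>]+)>\s*;\s*rel="?canonical"?', re.IGNORECASE)


def seo_header_summary(headers):
    """Extract canonical, robots, and caching signals from a header mapping."""
    # Header names are case-insensitive, so normalise them first.
    lowered = {name.lower(): value for name, value in headers.items()}
    canonical = CANONICAL_LINK.search(lowered.get("link", ""))
    robots = lowered.get("x-robots-tag", "")
    return {
        "canonical": canonical.group(1) if canonical else None,
        "noindex": "noindex" in robots.lower(),
        "cache_control": lowered.get("cache-control"),
    }


# Invented example headers, for illustration only.
EXAMPLE_HEADERS = {
    "Link": '<https://example.com/shoes>; rel="canonical"',
    "X-Robots-Tag": "noindex, nofollow",
    "Cache-Control": "max-age=3600",
}

if __name__ == "__main__":
    print(seo_header_summary(EXAMPLE_HEADERS))
```

Header-level signals like these can contradict what appears in the page markup, which is one reason to check them at the request level rather than rely on a crawler summary.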

The analysis used speed testing as an example of simulation versus reality. Lighthouse mobile tests run under throttled network conditions that simulate a slow 4G connection, and might show a Largest Contentful Paint (LCP) of 4.5 seconds. Chrome User Experience Report field data, which reflects real users across all devices and connections, might show a 75th percentile LCP of 2.8 seconds because many actual visitors use faster connections.

The lab result helps with debugging, the column stated, but does not reflect the distribution of real user experiences.
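The difference between a single lab number and a field percentile can be shown with a small Python sketch. The 4.5 second lab value comes from the column's example. The list of field samples is invented so that its 75th percentile lands on the 2.8 seconds mentioned above. Field datasets typically publish aggregated values rather than raw samples, so the list is only a stand-in, and the nearest-rank method used here is one of several percentile definitions.

```python
import math


def percentile_nearest_rank(values, pct):
    """Return the pct-th percentile of values using the nearest-rank method."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("no samples provided")
    # Nearest rank: the smallest value with at least pct% of samples at or below it.
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


# Single throttled lab run, taken from the column's example.
LAB_LCP_SECONDS = 4.5

# Invented per-visit LCP samples in seconds, for illustration only.
FIELD_LCP_SECONDS = [1.2, 1.6, 1.9, 2.1, 2.4, 2.8, 3.9, 5.2]

if __name__ == "__main__":
    p75 = percentile_nearest_rank(FIELD_LCP_SECONDS, 75)
    print("lab LCP:", LAB_LCP_SECONDS)
    print("field p75 LCP:", p75)
```

In this invented data some visits are slower than the lab run, yet three quarters of them are at or under 2.8 seconds, which is the kind of spread a single lab figure hides.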

Joined-Up Analysis Remains Rare

Technical SEO tools largely operate independently of one another, according to the column. While some platforms integrate—allowing crawlers to ingest Google Search Console data or keyword trackers to use Google Analytics information—most remain siloed.

The analysis warned that examining only one or two tools risks missing critical information about a website. A holistic understanding of potential or actual performance requires multiple data sets, the column stated.

The false sense of completeness becomes most damaging when practitioners accept a crawler’s prioritised checklist without question. A tool might flag 200 pages with missing meta descriptions and suggest addressing them immediately. Raw Search Console data, however, might reveal that those pages generate zero impressions, making the fix irrelevant to actual performance.
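The cross-check described here can be sketched in a few lines of Python. The example assumes a crawler's list of flagged URLs and a URL-to-impressions mapping built from a Search Console export. Both inputs and the `prioritise_flagged_pages` function are hypothetical. Because exports are subject to row limits and privacy filtering, a URL missing from the mapping is treated as zero impressions here, which may understate its real visibility.

```python
def prioritise_flagged_pages(flagged_urls, impressions_by_url):
    """Split flagged URLs into those with recorded impressions and those without."""
    with_impressions = []
    without_impressions = []
    for url in flagged_urls:
        impressions = impressions_by_url.get(url, 0)  # missing URL counts as zero
        if impressions > 0:
            with_impressions.append((url, impressions))
        else:
            without_impressions.append(url)
    # Highest-visibility pages first, so fixes land where they can matter.
    with_impressions.sort(key=lambda item: item[1], reverse=True)
    return with_impressions, without_impressions


# Invented inputs, for illustration only.
FLAGGED_MISSING_META = ["/shoes", "/archive/2011", "/contact", "/tag/misc"]
SEARCH_CONSOLE_IMPRESSIONS = {"/shoes": 1800, "/contact": 240, "/archive/2011": 0}

if __name__ == "__main__":
    worth_fixing, low_priority = prioritise_flagged_pages(
        FLAGGED_MISSING_META, SEARCH_CONSOLE_IMPRESSIONS
    )
    print("fix first:", worth_fixing)
    print("low priority:", low_priority)
```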

What This Means for Australian Small and Medium Businesses

Australian small and medium businesses evaluating technical SEO investments face a choice between automated tool subscriptions and investing time in raw data analysis. The Search Engine Journal column suggests the most cost-effective approach combines limited tool use for initial discovery with regular examination of free sources like Google Search Console exports and server logs.

For businesses without in-house technical expertise, the analysis implies that agency or consultant selection should prioritise practitioners who request server access and raw analytics data rather than those relying solely on third-party crawler reports. Questions about log file analysis and field data versus lab testing can separate vendors who understand the completeness gap from those who don’t.

The column’s timing—ahead of the typical mid-year SEO budget review period—gives Australian marketing managers a framework for evaluating whether current technical SEO spending delivers genuine diagnostic value or just reassuring dashboards. Server log analysis requires more manual effort than running automated crawls, but the column’s argument suggests that effort translates directly to understanding how search engines actually interact with a site rather than how tools think they might.