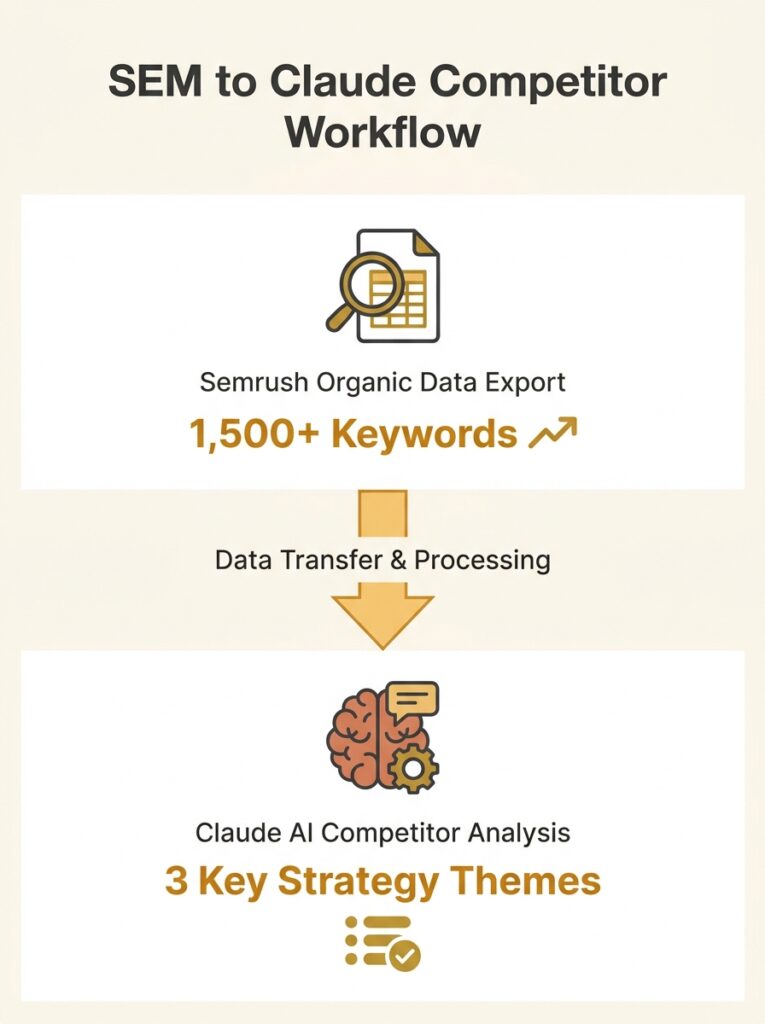

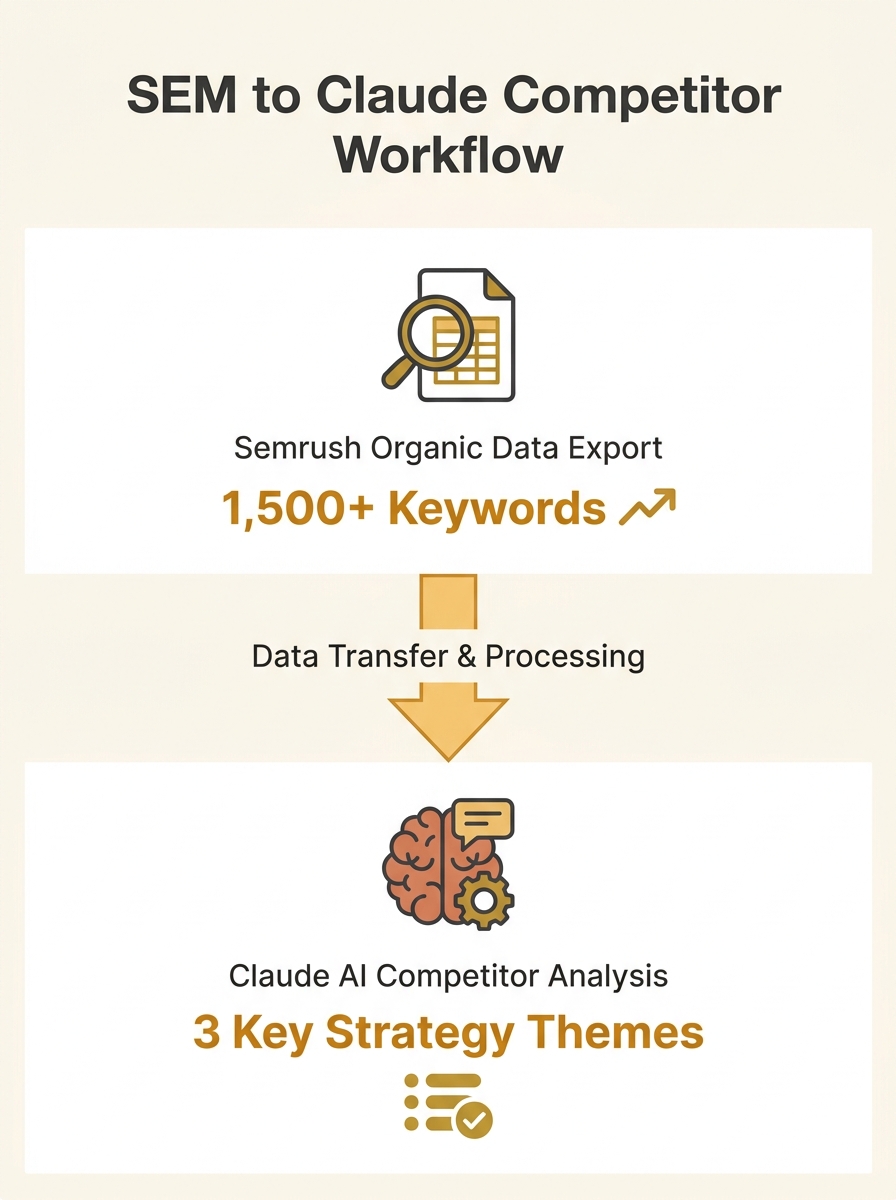

A methodology piece by Ross Dunn, published on Search Engine Land on April 22, describes a competitor analysis workflow that compresses what used to be a full afternoon of manual SEO research into 20 minutes by pairing AI tools with Semrush exports.

The workflow centers on feeding two specific Semrush data exports into Claude or ChatGPT and running structured prompts that classify, cluster, and compare competitor data. Dunn tested the method on real client sites in early 2026, anonymizing the results for publication.

The core workflow

The methodology requires two Semrush exports for each competitor and the client site: the Organic Research Pages report (top 50-100 URLs sorted by traffic) and the Organic Research Positions report (top 100-500 keywords sorted by traffic). The Pages export shows which URLs win traffic, while the Positions export reveals which search queries drive that traffic.
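As a rough illustration of the trimming step, the Python sketch below parses a CSV export and keeps only the highest-traffic rows. It assumes a CSV with a header row and a plain numeric `Traffic` column; real Semrush column names and number formatting may differ, so check them against your own file. The limits of 100 pages and 500 keywords are the upper ends of the ranges above, and `top_rows` is a hypothetical helper, not part of any Semrush API.

```python
import csv
import io

# Upper ends of the suggested ranges (top 50-100 pages, top 100-500 keywords).
PAGES_LIMIT = 100
POSITIONS_LIMIT = 500


def top_rows(csv_text: str, limit: int, traffic_col: str = "Traffic") -> list[dict]:
    """Return the `limit` rows with the highest traffic from a CSV export.

    Hypothetical helper: assumes a header row and a plain numeric traffic
    column (no thousands separators). Blank traffic values count as zero.
    """
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    # Sort descending by traffic so the biggest traffic drivers come first.
    rows.sort(key=lambda row: float(row.get(traffic_col) or 0), reverse=True)
    return rows[:limit]


# Usage with a tiny, made-up Pages export.
sample_pages = "URL,Traffic\n/blog/a,120\n/products/b,900\n/support/c,45\n"
print([row["URL"] for row in top_rows(sample_pages, limit=PAGES_LIMIT)])
```

The same helper works for the Positions export by passing `POSITIONS_LIMIT` and reading the keyword column instead of the URL column.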

Dunn’s prompt instructs the AI to assign each URL to a topic category, label its page type (product, editorial, support, or category), and generate summary tables showing each topic cluster’s page count, total traffic, and dominant intent. The prompt explicitly instructs the AI not to fabricate data and requires it to flag ambiguous URLs for manual review.
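Once each URL carries a label, building the summary table is plain aggregation that can also be done, or double-checked, in code. The sketch below assumes the model’s labels have been parsed into `ClassifiedPage` records (a hypothetical structure), with `topic` set to `None` for URLs the model flagged as ambiguous. It takes “dominant intent” to mean the intent carrying the most traffic within a cluster; that definition is an assumption, and a simple majority by page count would be an equally reasonable choice.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClassifiedPage:
    url: str
    topic: Optional[str]  # None when the model flagged the URL as ambiguous
    page_type: str        # product, editorial, support, or category
    intent: str           # intent label assigned by the model (assumed field)
    traffic: float        # copied from the Pages export, never estimated


def summarize(pages: list[ClassifiedPage]) -> tuple[list[dict], list[str]]:
    """Build per-topic summary rows and collect URLs that need manual review."""
    page_counts: Counter = Counter()
    topic_traffic: defaultdict = defaultdict(float)
    intent_traffic: defaultdict = defaultdict(Counter)
    needs_review = []

    for page in pages:
        if page.topic is None:
            needs_review.append(page.url)  # ambiguous: route to a human
            continue
        page_counts[page.topic] += 1
        topic_traffic[page.topic] += page.traffic
        intent_traffic[page.topic][page.intent] += page.traffic

    summary = [
        {
            "topic": topic,
            "pages": page_counts[topic],
            "traffic": total,
            # Intent with the most traffic inside this cluster.
            "dominant_intent": intent_traffic[topic].most_common(1)[0][0],
        }
        for topic, total in topic_traffic.items()
    ]
    summary.sort(key=lambda row: row["traffic"], reverse=True)
    return summary, needs_review


# Made-up example input.
pages = [
    ClassifiedPage("/pricing", "pricing", "product", "commercial", 500),
    ClassifiedPage("/guide-1", "guides", "editorial", "informational", 300),
    ClassifiedPage("/guide-2", "guides", "editorial", "informational", 250),
    ClassifiedPage("/misc", None, "support", "informational", 50),
]
summary, review = summarize(pages)
print(summary)
print(review)
```

Keeping the ambiguous URLs in a separate list, rather than forcing them into the nearest cluster, mirrors the prompt’s requirement that they go to manual review.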

Validation requirements

The article identifies AI fabrication as the primary risk. Without structured prompts and data validation, AI tools produce “plausible-sounding traffic estimates, keyword lists, and competitive assessments that are partially or entirely fabricated,” Dunn wrote.

The workflow addresses this by requiring users to start from actual tool exports rather than asking the AI to estimate competitor metrics. Two optional inputs can supplement the core exports: a third Semrush export, the Keyword Gap report, and a Screaming Frog crawl for context on site structure.
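Because the main risk is fabrication, one practical check is to diff whatever the model returns against the export it was given. The sketch below is a hypothetical helper, not a step the article publishes as code. It assumes both sides have been reduced to rows with `url` and `traffic` keys, and it reports URLs the model invented, URLs it silently dropped, and traffic figures that no longer match the export.

```python
def validate_against_export(
    export_rows: list[dict], model_rows: list[dict], tolerance: float = 0.0
) -> dict[str, list[str]]:
    """Compare model output with the source export it was built from.

    Both inputs are lists of {"url": ..., "traffic": ...} dicts (assumed shape).
    """
    export = {row["url"]: float(row["traffic"]) for row in export_rows}
    model = {row["url"]: float(row["traffic"]) for row in model_rows}
    return {
        # URLs that appear in the model output but not in the export.
        "invented": sorted(model.keys() - export.keys()),
        # Export URLs the model left out of its tables.
        "dropped": sorted(export.keys() - model.keys()),
        # URLs present in both whose traffic figure was changed.
        "mismatched": sorted(
            url for url in model.keys() & export.keys()
            if abs(model[url] - export[url]) > tolerance
        ),
    }


export_rows = [{"url": "/a", "traffic": 100}, {"url": "/b", "traffic": 50}]
model_rows = [
    {"url": "/a", "traffic": 100},
    {"url": "/b", "traffic": 75},  # altered figure
    {"url": "/z", "traffic": 10},  # URL not in the export
]
report = validate_against_export(export_rows, model_rows)
print(report)
```

Any non-empty list in the report is a signal to check that part of the output by hand before using it.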

For Site Y in Dunn’s anonymized case study, Claude identified seven topic clusters across 100 pages. The analysis surfaced differences in topical depth and content coverage between the client site and two competitors.
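As a hedged illustration of the comparison step, once each site’s pages are grouped into topic clusters, one simple way to surface coverage differences is to compare page counts per topic and list where a competitor has more pages than the client. Page count is only a rough proxy for topical depth, `coverage_gaps` is a hypothetical helper, and the site names and numbers below are made up.

```python
def coverage_gaps(
    client: dict[str, int], competitors: dict[str, dict[str, int]]
) -> dict[str, dict[str, int]]:
    """Map each topic to the competitors that have more pages on it than the client."""
    all_topics = set(client)
    for counts in competitors.values():
        all_topics |= set(counts)

    gaps = {}
    for topic in sorted(all_topics):
        ours = client.get(topic, 0)  # topics the client lacks count as zero pages
        ahead = {
            name: counts.get(topic, 0)
            for name, counts in competitors.items()
            if counts.get(topic, 0) > ours
        }
        if ahead:
            gaps[topic] = ahead
    return gaps


# Hypothetical page counts per topic cluster.
client_counts = {"pricing": 3, "guides": 1}
competitor_counts = {
    "Competitor A": {"guides": 6, "integrations": 4},
    "Competitor B": {"pricing": 2, "guides": 1},
}
gaps = coverage_gaps(client_counts, competitor_counts)
print(gaps)
```

Swapping page counts for summed traffic per topic would turn the same comparison into a measure of where competitors win more visits rather than where they publish more.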

Human judgment still required

The article emphasizes that AI handles clustering, pattern matching, and synthesis but cannot make strategic decisions. The workflow includes a validation checklist and flags where practitioners must apply judgment rather than accept AI outputs directly.

Dunn noted that AI-generated outputs “sound confident” and produce “clean” tables, creating a false sense of reliability. The methodology compensates by requiring manual verification of flagged URLs and limiting AI use to data organization rather than strategic interpretation.

The workflow is aimed at businesses that already use Semrush for competitive research. The article lists Screaming Frog among the optional tools; its crawl data covers site architecture and internal linking, which Semrush exports do not include.

Why this matters now

Australian businesses evaluating SEO agencies or running internal optimisation programs now have a documented workflow that separates legitimate AI efficiency gains from fabricated analysis. The time compression from hours to minutes matters less than the validation framework, which addresses the core reliability problem AI introduces into competitive research.

For marketing managers deciding whether to invest in Semrush subscriptions or AI tool access, the methodology makes the cost-benefit calculation concrete. The workflow requires paid Semrush access and an AI assistant capable of processing large data sets; according to the article, both Claude and ChatGPT can handle the task.

The publication timing coincides with broader practitioner concern about AI hallucination in SEO tools. Dunn’s workflow treats AI as a data processor rather than an analyst, preserving the strategic layer for human evaluation while eliminating manual clustering work. That division of labor reflects how AI tools will likely integrate into SEO practice long-term: fast pattern recognition paired with human validation, not autonomous decision-making.