ChatGPT scrapes a webpage. It synthesises an answer. That answer gets published, indexed, and scraped again by the next model’s training run. The loop closes, and every rotation can inject another layer of fabricated or degraded information into the results Australian businesses depend on for visibility. This feedback mechanism, known as AI search poisoning, is arguably the most serious threat to search reliability right now. The widespread misunderstanding is that it’s something only hackers do. The reality, as Search Engine Journal reported this week, is that the SEO industry itself is a primary source. The synthetic content is already in the retrieval layer, and answer engines are laundering it as fact.

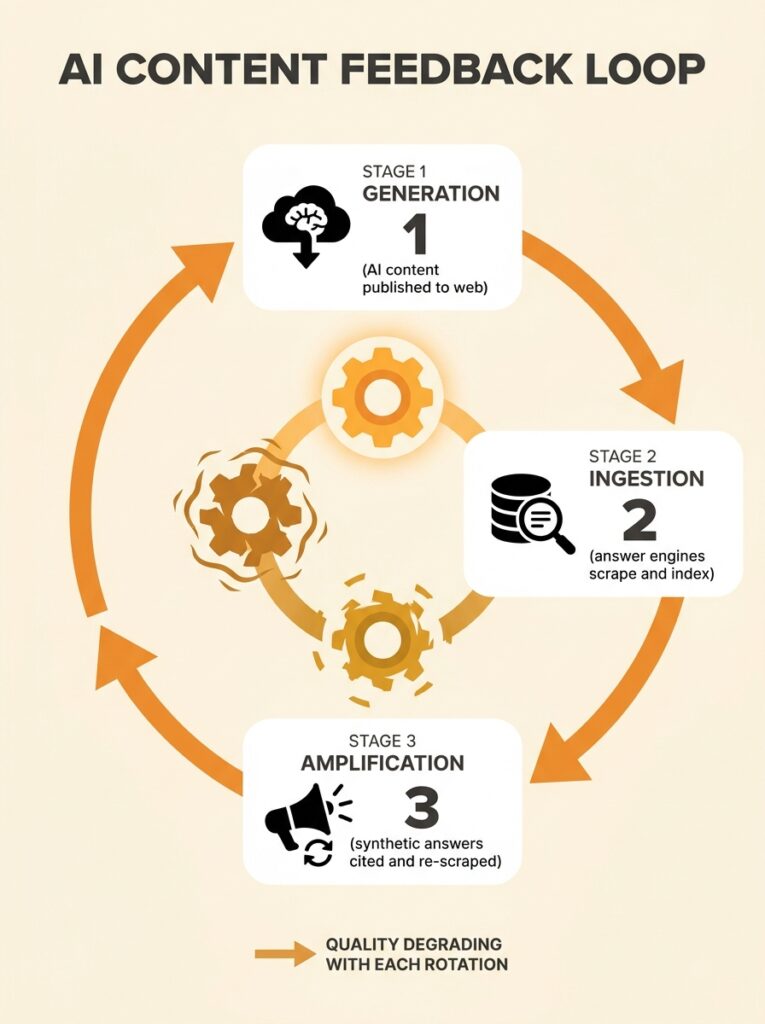

How the Feedback Loop Actually Works

The mechanism has three stages, and understanding each one matters for any SEO strategy in 2026.

Stage one: generation. Someone publishes AI-generated content at scale. Maybe it’s a business trying to fill out its blog, maybe it’s a content farm targeting long-tail keywords, or maybe it’s a deliberate actor seeding false claims. The content hits the open web.

Stage two: ingestion. Answer engines like Google AI Overviews, ChatGPT, Perplexity, and Copilot crawl and index that content. Their retrieval-augmented generation systems pull it into the context window when answering user queries. A 2025 study found that 15–25% of scraped datasets contain low-quality or unverifiable content, which means a meaningful chunk of what these systems ingest is already compromised.

Stage three: amplification. The answer engine presents the synthetic content as a direct answer. Users trust it. Other publishers cite it. The fabricated claim becomes training data for the next generation of models. The contamination isn’t theoretical or future-tense. It’s running right now, and the impact of synthetic content on SEO compounds with each cycle.
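
To make the compounding concrete, here is a toy model of the three stages as a loop. It is a sketch under loud assumptions, not a measurement: the function name `simulate_loop` and the parameters `fabrication_rate` and `synthetic_share` are invented for illustration, and the 0.15 starting point simply borrows the lower bound of the 15–25% figure above.

```python
def simulate_loop(cycles, initial_error=0.15, fabrication_rate=0.05,
                  synthetic_share=0.5):
    """Return the share of unreliable content after each cycle (toy model).

    Assumptions (all hypothetical):
    - each cycle, `synthetic_share` of the corpus is replaced by model output;
    - model output inherits the corpus's current error share and adds
      `fabrication_rate` new errors to the part that was still correct.
    """
    error = initial_error
    history = [error]
    for _ in range(cycles):
        # Stage one and two: models generate from, and re-ingest, the corpus.
        synthetic_error = error + (1 - error) * fabrication_rate
        # Stage three: synthetic output is blended back into the corpus.
        error = (1 - synthetic_share) * error + synthetic_share * synthetic_error
        history.append(error)
    return history


if __name__ == "__main__":
    for cycle, share in enumerate(simulate_loop(5)):
        print(f"cycle {cycle}: {share:.1%} unreliable")
```

The numbers themselves are made up. What the sketch shows is the shape: as long as each pass adds any new errors and nothing removes them, the unreliable share only moves in one direction.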

The Poisoning Taxonomy

Not all synthetic content contamination looks the same. Microsoft’s security team coined the term AI Recommendation Poisoning in February 2026 to describe promotional techniques that mirror traditional SEO poisoning but target AI assistants instead of search engines or user devices. The broader category includes manipulated training data, false web content, and injected instructions designed to corrupt what AI systems learn or tell users.

For Australian businesses, the practical categories break down like this:

- Accidental pollution. A business publishes AI-generated service pages without fact-checking. The pages contain plausible-sounding but incorrect claims about regulations, pricing, or capabilities. Answer engines pick these up and present them as authoritative.

- Deliberate manipulation. A competitor or bad actor seeds content designed to influence what AI systems recommend. This includes fake reviews, fabricated case studies, and manufactured expert quotes.

- Systemic decay. As more of the web becomes AI-generated, the training data for future models contains less human-verified information. Content quality in AI search degrades with each cycle, and no single actor is responsible.

The practical impact is already measurable. A study released on April 22 found that a growing number of Australian small businesses are losing high-intent leads because they’re invisible to AI-powered search tools like ChatGPT and Google AI Overviews. Some of the businesses that are visible got there by flooding the web with exactly the kind of content that degrades the system for everyone.

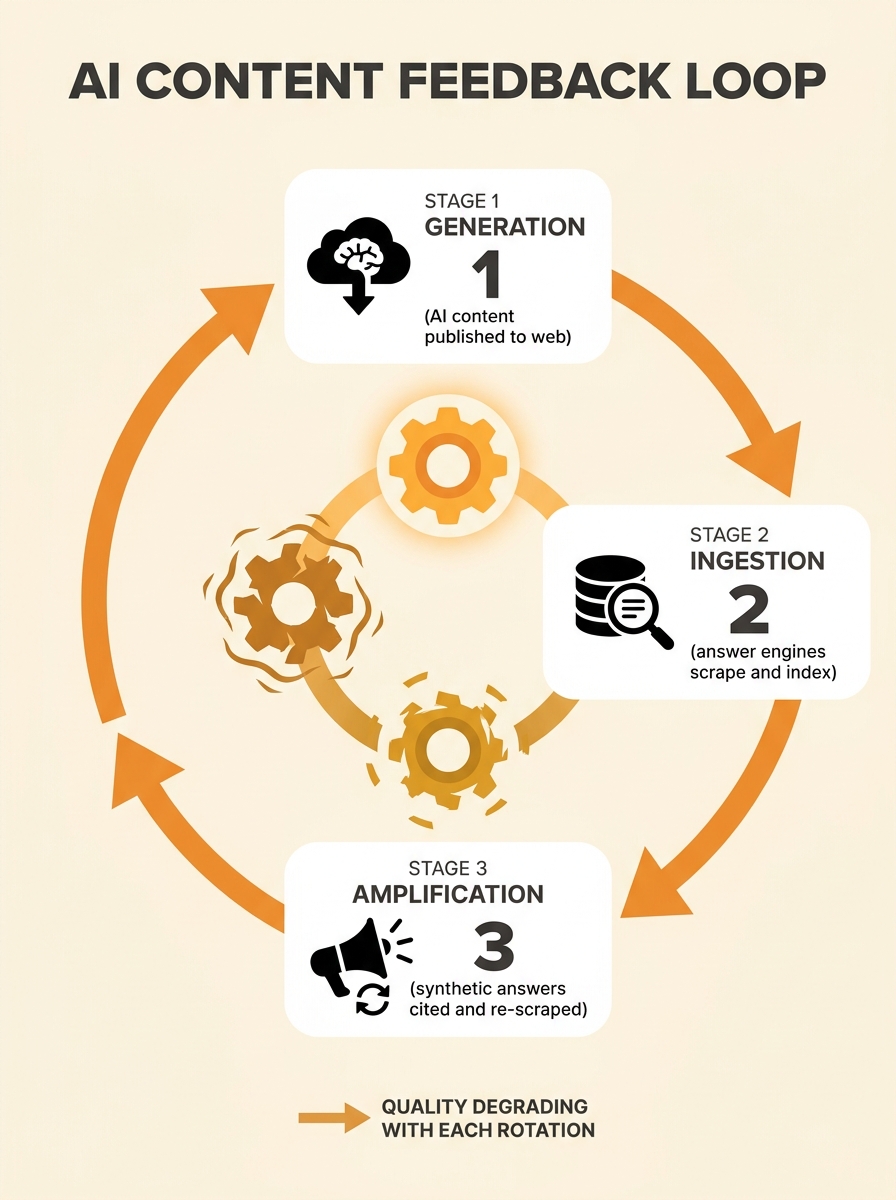

Why Answer Engines Struggle with Authenticity Signals

Traditional search ranking had a blunt but useful filter: backlinks from real websites, written by real people, earned over real time. PageRank wasn’t perfect, but manufacturing authority was expensive.

Answer engines operate differently. They evaluate content based on topical relevance, structural clarity, and source consistency. When every piece of content on the web is structurally optimised and topically relevant (because it was generated by a model trained on the same data), the authenticity signal disappears. Authenticity in answer engines becomes an urgent question because the surface-level markers of quality are trivially easy to produce at scale. Clear structure, relevant terminology, proper formatting: a $20-per-month AI tool produces all of these in seconds.

Google DeepMind’s SynthID, first released for images in 2023 and later extended to text, is a watermarking and detection system designed to tag AI-generated content. But watermarking only works on content generated by cooperating systems. Content produced by open-source models, fine-tuned local deployments, or deliberately evasive actors carries no such markers.

If you’ve been following how E-E-A-T signals factor into rankings, the same logic applies in amplified form here. Experience and expertise are hard to fake in traditional search. In answer engine retrieval, they’re harder to verify and easier to simulate.

The Australian Regulatory and Consumer Context

Australia is moving faster than most markets on disclosure requirements. A Meltwater and YouGov survey released this week found that 86% of Australians want brands to disclose their AI-generated content. That figure signals consumer trust is already fragile, and businesses ignoring the signal are taking a reputational bet that gets worse by the quarter.

The regulatory environment reinforces this. The Australian Government rejected a proposed text and data mining exception to the Copyright Act in October 2025, meaning AI developers can’t freely train on copyrighted Australian content without licensing. From March 2026, internet services including ChatGPT and Google AI Overviews must restrict minors from accessing certain categories of AI-generated content, with fines of up to A$49.5 million for non-compliance.

PwC’s AI performance study from this week shows Australian companies outperform globally on AI governance but lag on extracting real ROI from AI investments. That pattern mirrors what we’re seeing in content strategy: businesses know they should be careful with AI content, but the temptation to publish at scale often wins out over the discipline required to maintain genuine expertise in every published page.

For businesses that are tracking their visibility in AI citation systems, these regulatory shifts matter directly. Your competitors’ AI-generated content faces increasing scrutiny from both detection systems and consumers, and the businesses that maintain verifiable content hold an increasing advantage as enforcement tightens.

How the Contamination Hits Your Specific Search Visibility

The mechanism affects different business types in distinct ways. If you’re running an e-commerce site, AI-generated product descriptions that mirror your competitors’ AI-generated descriptions create a homogeneity problem. The answer engine can’t distinguish between sources because they all read identically. If you operate in a local market, fabricated reviews and AI-written local content can push your genuine business information down in AI-generated results.
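
One rough way to see the homogeneity problem in your own catalogue is to measure how much wording two descriptions share. The sketch below uses Jaccard similarity over three-word shingles, a common near-duplicate heuristic. It is not how any answer engine is known to compare sources, and the function names and the 0.5 threshold are assumptions chosen for illustration.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Split text into a set of overlapping word sequences of length `size`."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Shared shingles divided by all distinct shingles across both sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages, threshold=0.5):
    """Return (name_a, name_b, score) for page pairs at or above `threshold`.

    `pages` maps a page name to its text. The threshold is an arbitrary
    starting point, not an industry standard.
    """
    sets = {name: shingles(text) for name, text in pages.items()}
    flagged = []
    for a, b in combinations(sorted(sets), 2):
        score = jaccard(sets[a], sets[b])
        if score >= threshold:
            flagged.append((a, b, round(score, 2)))
    return flagged
```

Run it over your own descriptions alongside a few competitors’ descriptions of the same products. Pairs that score high are the ones most likely to read as interchangeable.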

The structural fix starts with your site architecture. When your content is organised around genuine expertise rather than keyword-volume targets, answer engines have more unique signal to work with. Pages that reference specific Australian regulations, cite local data, name real team members, and describe actual project outcomes carry provenance that AI-generated content struggles to replicate convincingly.

Tip: Check whether your key service pages contain claims that could have been written by anyone in your industry, anywhere in the world. If so, they’re indistinguishable from synthetic content to an answer engine. Add specific Australian context, named clients (with permission), project dates, and measurable outcomes.
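
The check in the tip can be roughed out in a few lines. This is a minimal sketch, assuming that years, dollar or percentage figures, and Australian place names are reasonable stand-ins for “specific context”. The `MARKERS` patterns, the function names, and the `min_markers` cut-off are all hypothetical and would need tuning for your industry.

```python
import re

# Hypothetical marker patterns; extend these for your own sector and region.
MARKERS = {
    "year": re.compile(r"\b(?:19|20)\d{2}\b"),
    "figure": re.compile(r"(?:A?\$\s?\d[\d,]*(?:\.\d+)?|\b\d+(?:\.\d+)?\s?%)"),
    "au_place": re.compile(
        r"\b(?:Australia|Australian|NSW|Victoria|Queensland|Sydney|Melbourne|"
        r"Brisbane|Perth|Adelaide|Hobart|Darwin|Canberra)\b"
    ),
}


def specificity_report(text):
    """Count how many times each marker type appears in the text."""
    return {name: len(pattern.findall(text)) for name, pattern in MARKERS.items()}


def looks_generic(text, min_markers=2):
    """Flag text with fewer than `min_markers` specificity markers in total."""
    return sum(specificity_report(text).values()) < min_markers
```

A page that `looks_generic` flags isn’t necessarily synthetic, and a page that passes isn’t necessarily good. The report is only a prompt for where to add named clients, dates, and outcomes by hand.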

Understanding how Google AI Overviews select and present sources gives you a practical framework for evaluating which of your pages are likely to survive the synthetic content filter and which will be treated as interchangeable with a thousand other sites saying the same thing in the same way.

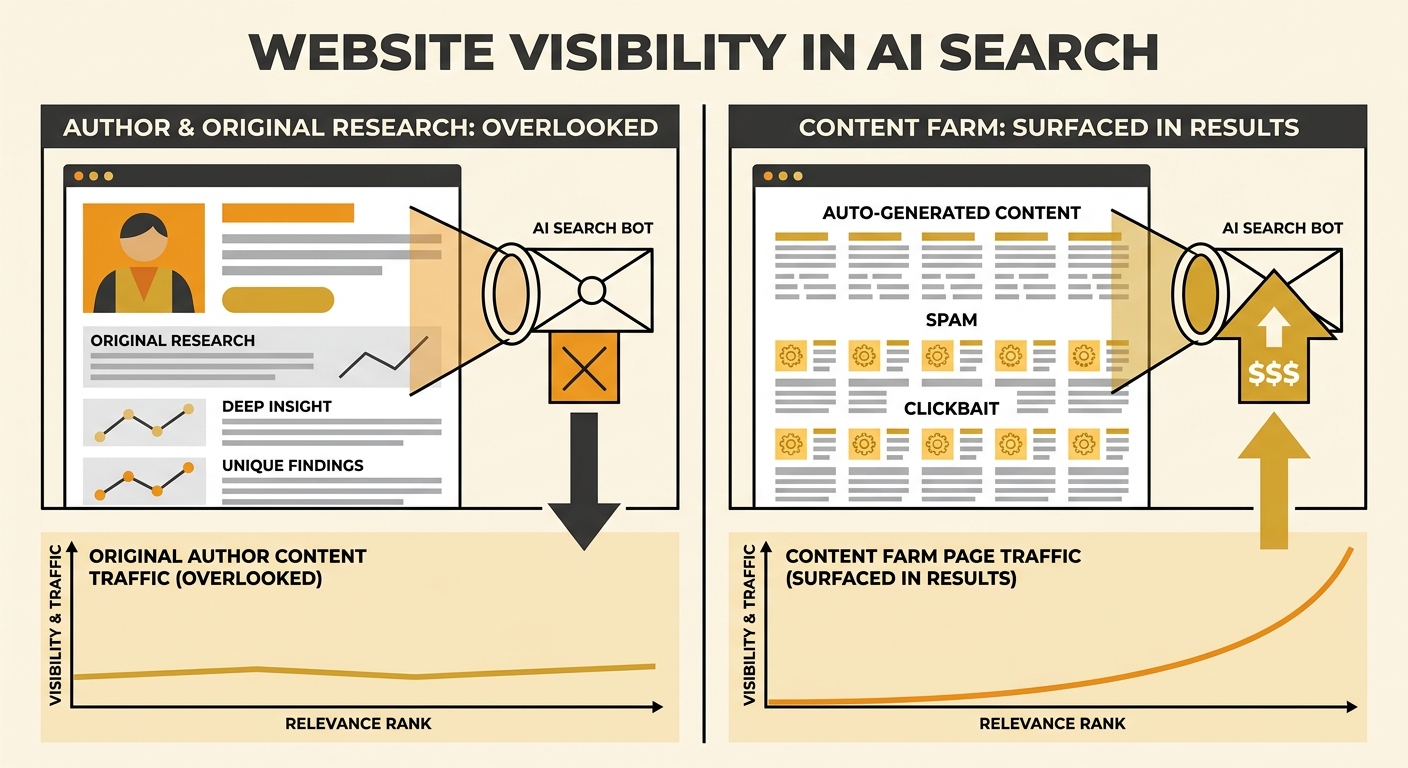

Where This Mechanism Breaks Down

The feedback loop has built-in failure points that work in favour of businesses creating genuine content, and understanding these failure points is what separates a reactive response from a strategic one.

First, AI-generated text has statistical fingerprints. Token frequency distributions, sentence-level entropy patterns, and vocabulary clustering all differ measurably between human and machine-generated text. Detection systems are imperfect today, but they improve with each training cycle. Google’s classifier improvements through 2025 and into 2026 have focused specifically on these patterns, and generic content gets detected and filtered precisely because it could have been written about any niche by anyone.
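
To give a feel for what “statistical fingerprints” means, the sketch below computes three simple descriptive statistics: the Shannon entropy of the word distribution, the ratio of unique words to total words, and the spread of sentence lengths. It is emphatically not a detector and not Google’s method. Production classifiers are far more sophisticated, and the function name `text_stats` is an assumption for illustration.

```python
import math
import re
from collections import Counter
from statistics import pstdev


def text_stats(text):
    """Return simple distributional statistics for a passage of text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    counts = Counter(tokens)
    total = len(tokens)

    # Shannon entropy (in bits) of the word frequency distribution.
    entropy = 0.0
    if total:
        entropy = -sum((c / total) * math.log2(c / total) for c in counts.values())

    # Words per sentence; low spread means uniformly sized sentences.
    lengths = [len(re.findall(r"[a-z']+", s.lower())) for s in sentences]

    return {
        "token_entropy_bits": entropy,
        "type_token_ratio": len(counts) / total if total else 0.0,
        "sentence_length_stdev": pstdev(lengths) if lengths else 0.0,
    }
```

Comparing these numbers across a handful of your own pages won’t tell you which ones a classifier would flag. It does show that evenly paced, vocabulary-flat copy is measurably different from writing with more variation.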

Second, the economic incentive shifts over time. Content farms that rely on volume play a short-term arbitrage game. As answer engines improve their ability to verify claims against authoritative sources, the return on fabricated content drops. The cost of maintaining a network of synthetic content that passes detection goes up every quarter while the payoff shrinks.

Third, user behaviour creates a correction signal. When users consistently find that AI answers are wrong or unhelpful, they revert to traditional search, direct-to-site behaviour, or alternative sources. This gives answer engine developers strong motivation to fix retrieval quality, because their entire business model depends on user trust remaining intact.

The mechanism is real, it’s active, and it’s affecting Australian businesses right now. But it contains the ingredients for its own correction. The businesses publishing synthetic content at scale are borrowing visibility from a system that’s actively learning to revoke it, while the businesses investing in verifiable, Australian-specific, human-authored content are building the kind of provenance that becomes more valuable with every improvement to detection and retrieval. The question for any Australian business reading this in April 2026 isn’t whether the poisoning is happening. It’s whether your content is part of the problem or positioned to benefit as the correction accelerates.